When shared-nothing scale-out storage was the next big idea, we heard a lot about the promise of RAIN (Redundant Arrays of Independent Nodes) clusters that would logically live forever. When products actually came to market, they never really delivered on that promise because those products could only create a cluster, or a storage pool, if all the nodes in the cluster were identical.

From HCI solutions like Nutanix and vSAN to SolidFire and PowerScale (FKA Isilon), shared-nothing solutions have relied on symmetrical clusters of identical nodes to create the storage pools across which they load-balance and protect data. Some, like PowerScale, federate multiple storage pools into a common namespace, but that creates problems of its own.

The most obvious problem with symmetric clusters is that they lock users into a fixed compute/storage ratio. Once a customer uses nodes holding 15 3.84TB drives, they have to expand their system with those nodes even if they would prefer fewer nodes with 15.36TB drives for denser capacity or more nodes with 1.6TB NVMe SSDs for performance.

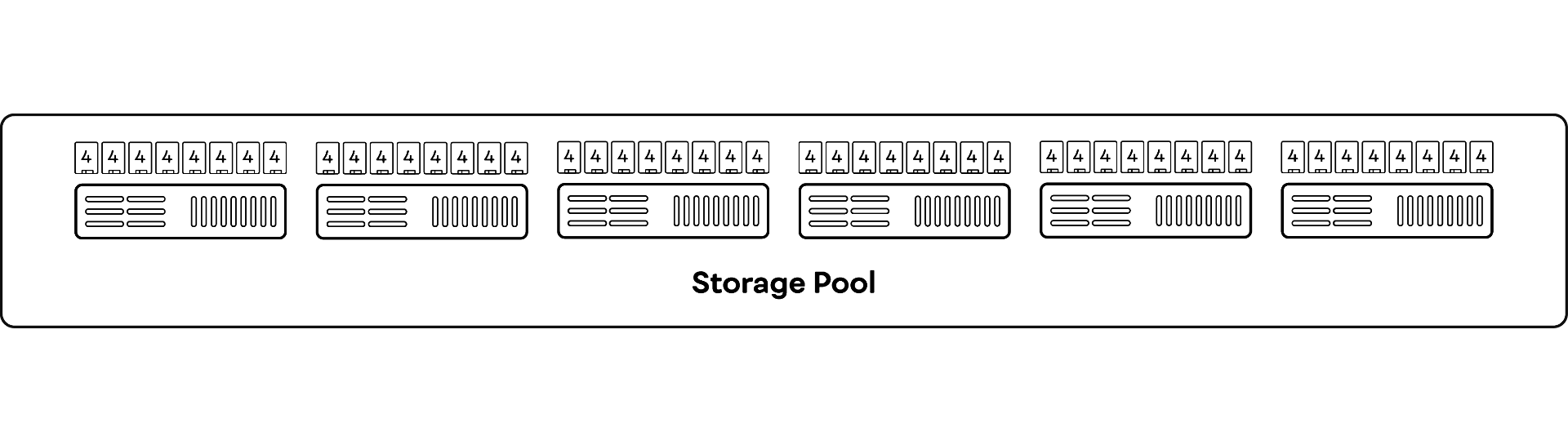

Symmetric Shared-Nothing Cluster

Storage systems require symmetric clusters because symmetric clusters are simple. When all the nodes have the same capacity and performance, the system can load balance by simply writing data evenly across the nodes. Asymmetric clusters require more sophisticated load balancing.
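To see why even distribution stops working once nodes differ, here is a minimal sketch comparing an even split with a capacity-weighted split. The function names and the three-node example (two nodes with 15 3.84TB drives, one with 15 15.36TB drives) are illustrative assumptions, not any vendor's placement code.

```python
def even_split(total_tb, capacities_tb):
    """Symmetric policy: every node receives the same amount of data."""
    share = total_tb / len(capacities_tb)
    return [share for _ in capacities_tb]


def weighted_split(total_tb, capacities_tb):
    """Capacity-aware policy: each node receives data in proportion to its size."""
    total_capacity = sum(capacities_tb)
    return [total_tb * c / total_capacity for c in capacities_tb]


def utilization(placed_tb, capacities_tb):
    """Fraction of each node's capacity consumed by the placed data."""
    return [p / c for p, c in zip(placed_tb, capacities_tb)]


# Hypothetical mixed cluster: 15 x 3.84TB, 15 x 3.84TB, 15 x 15.36TB
capacities = [15 * 3.84, 15 * 3.84, 15 * 15.36]
data_tb = sum(capacities) / 2  # fill the cluster to 50% overall

even_util = utilization(even_split(data_tb, capacities), capacities)
weighted_util = utilization(weighted_split(data_tb, capacities), capacities)

print("even split utilization:    ", [round(u, 2) for u in even_util])
print("weighted split utilization:", [round(u, 2) for u in weighted_util])
```

With the even split, the two small nodes are full while the large node sits mostly empty, even though the cluster as a whole is only half full. The weighted split keeps every node at the same utilization, but it is only the simplest possible fix: it accounts for capacity and ignores performance.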

Symmetric clusters also force customers into difficult decisions when their vendors release new generations of nodes. Once their vendor releases shiny new Gen4 nodes, any customer who needs to expand their cluster of 16 Gen3 nodes must choose between:

- Buying four more Gen3 nodes even though they’re already obsolete
- Replacing their existing Gen3 nodes with 10 Gen4 nodes even though they have a year or two of life and/or prepaid support left
- Adding a pool of 2 Gen4 nodes

Expanding with obsolete nodes is the least expensive option this year, but when the oldest nodes in the cluster reach their five-year end of life, the few old nodes bought this year will become a small orphan pool or be retired with a year or two of life remaining.

While adding a pool of new nodes may appear attractive, remember that each storage pool is a separate performance and failure domain. That means the new pool must have enough nodes to use erasure code stripes wide enough to provide decent efficiency, which could be 12-20 nodes.
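The link between pool size and efficiency is simple arithmetic. The sketch below assumes one strip per node and a fixed two parity strips per stripe; both are illustrative assumptions, and real products use a variety of layouts.

```python
def stripe_efficiency(nodes, parity=2):
    """Usable fraction of raw capacity when a stripe spans `nodes` nodes,
    with one strip per node and `parity` of those strips holding parity."""
    if nodes <= parity:
        raise ValueError("a stripe needs at least one data strip")
    return (nodes - parity) / nodes


for n in (4, 12, 20):
    print(f"{n:2d} nodes: {stripe_efficiency(n):.1%} usable")
```

Under these assumptions a 4-node pool gives up half its raw capacity to protection, while pools of 12 and 20 nodes give up a sixth and a tenth respectively. That is why a small pool of new nodes is a poor substitute for growing the existing one.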

More importantly, more pools are just more trouble to manage, as users have to create policies to migrate data from tier (pool) to tier, and that migration traffic consumes some of the system’s performance.

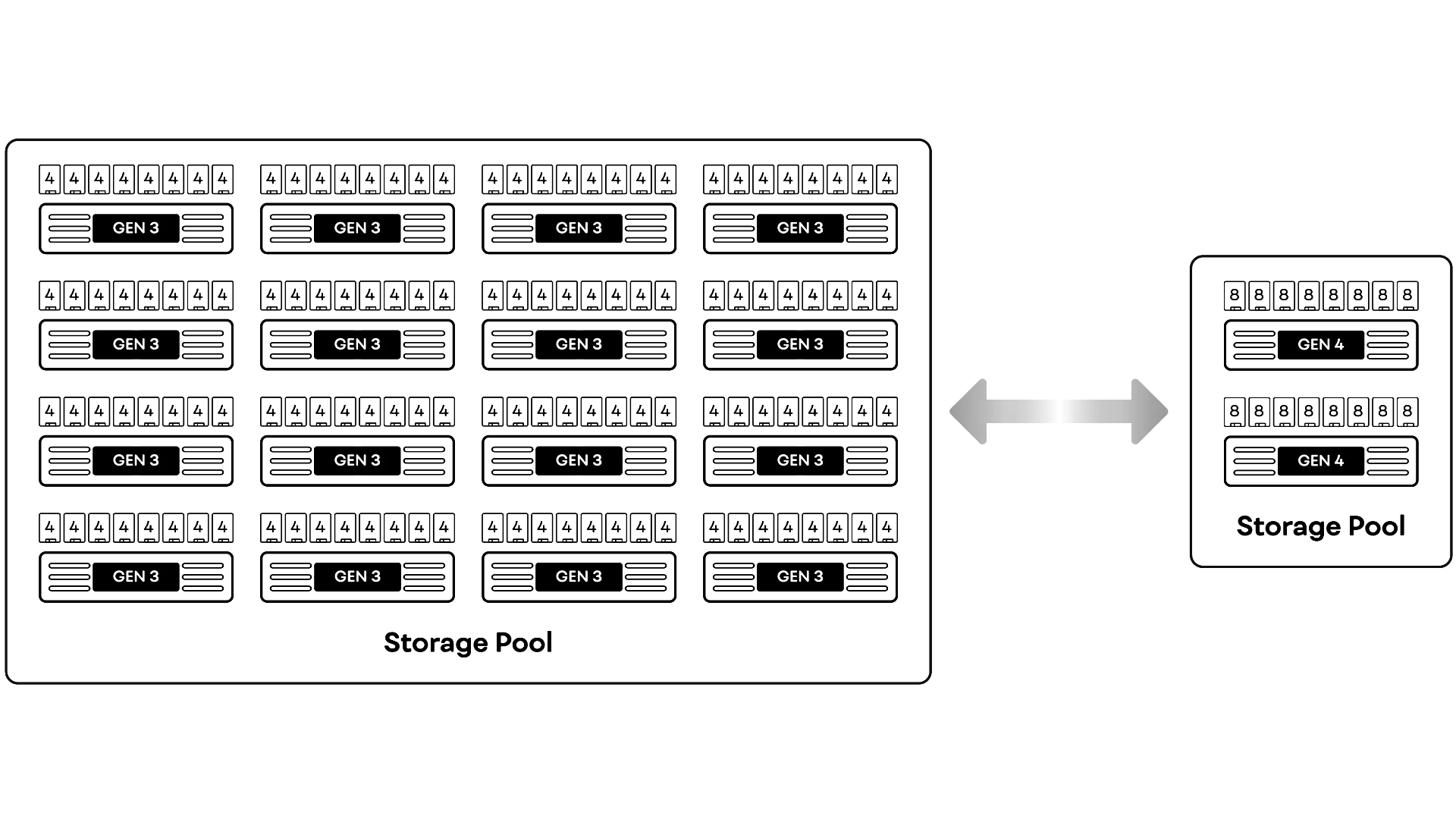

VAST Asymmetry

VAST systems form a single cluster and a single storage pool across storage enclosures holding different numbers of different-sized SSDs and front-end servers with different numbers of cores or even different CPU architectures. This allows VAST users to run clusters with multiple generations of VAST hardware seamlessly.

In VAST’s DASE architecture, all the SSDs are shared and directly addressed by all the front-end protocol servers via NVMe-oF. VAST’s data placement methods operate at the device, not the node/enclosure level. The system selects the SSDs to write each erasure-coded stripe across based on the performance, load, capacity, and endurance of all the SSDs in the system. This load balances across enclosures holding SSDs of different capacities and performance levels.
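As a rough illustration of device-level placement, the sketch below scores each SSD on the four factors named above and picks the best-scoring devices for a stripe. The `Ssd` fields, the multiplicative `score` function, and the sample values are all hypothetical; VAST's actual selection logic is not described in this article.

```python
from dataclasses import dataclass
import heapq


@dataclass(frozen=True)
class Ssd:
    name: str
    relative_performance: float  # 1.0 = baseline device (assumed scale)
    load: float                  # 0.0 (idle) .. 1.0 (saturated)
    free_fraction: float         # share of capacity still unused
    endurance_left: float        # share of rated write endurance remaining


def score(ssd):
    """Hypothetical score: faster, idler, emptier, less-worn SSDs rank higher."""
    return (ssd.relative_performance * (1.0 - ssd.load)
            * ssd.free_fraction * ssd.endurance_left)


def select_stripe_targets(ssds, width):
    """Pick the `width` best-scoring SSDs, regardless of enclosure."""
    if width > len(ssds):
        raise ValueError("stripe is wider than the number of available SSDs")
    return heapq.nlargest(width, ssds, key=score)


ssds = [
    Ssd("gen3-a", 1.0, 0.20, 0.30, 0.60),
    Ssd("gen3-b", 1.0, 0.90, 0.30, 0.60),  # busy device, should be skipped
    Ssd("gen4-a", 2.0, 0.20, 0.90, 0.95),
    Ssd("gen4-b", 2.0, 0.30, 0.90, 0.95),
]
targets = select_stripe_targets(ssds, 3)
print([s.name for s in targets])
```

Because the selection runs over individual devices, older and newer SSDs compete in the same ranking, and a busy or nearly full device is passed over without any pool boundary being involved.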

The system similarly load-balances across front-end protocol servers of different performance levels by resolving DNS requests to, and assigning shards of system housekeeping to, the protocol servers with the lowest CPU utilization.
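A least-utilized-first policy can be sketched in a few lines. In this hypothetical example each shard goes to whichever server currently reports the lowest CPU utilization, and the estimated cost of a shard is divided by the server's core count so that larger servers absorb more work. The server names, the cost model, and `assign_shards` itself are assumptions made for illustration.

```python
import heapq


def assign_shards(servers, shards, cost_per_shard=1.6):
    """Greedy assignment to the least-utilized server.

    servers: dict of name -> (cpu_utilization, cores)
    cost_per_shard: assumed load of one shard, in core-equivalents
    """
    heap = [(util, name) for name, (util, _cores) in servers.items()]
    heapq.heapify(heap)
    assignment = {name: [] for name in servers}
    for shard in shards:
        util, name = heapq.heappop(heap)   # lowest utilization right now
        assignment[name].append(shard)
        cores = servers[name][1]
        # Re-queue the server with its estimated utilization after the shard.
        heapq.heappush(heap, (util + cost_per_shard / cores, name))
    return assignment


# Hypothetical mixed-generation front end
servers = {"gen3-server": (0.50, 16), "gen4-server": (0.10, 64)}
assignment = assign_shards(servers, shards=list(range(24)))
for name, owned in assignment.items():
    print(name, len(owned), "shards")
```

The newer server, with more cores and more headroom, ends up with most of the shards, while the older server still contributes instead of being sidelined into a separate pool.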

All of this allows VAST clusters to create a single, load-balanced namespace across heterogeneous protocol servers, enclosures and SSDs of multiple generations. VAST users simply join new servers and/or enclosures to their clusters, and evict appliances when they reach the end of their useful lives.