Achieve high bandwidth performance with NFS enhancements from VAST Data

What was old is new again! And again! I originally wrote this blog in October 2021, setting the stage for VAST to explode into the market. Fast forward to 2026: VAST has grown 300-400% a year to become a behemoth in the arena of AI and HPC platforms, winning more NeoClouds, model trainers, National Labs, higher-ed institutions and other high-performance computing centers across the world than anyone else.

VAST grew >100x in the past 4 years!! So it’s time for an update to understand why. Strap in for a fresh look at NFS - the changes we have made in leveraging the venerable NFS protocol are astounding.

The Network File System protocol is the grizzled remote data access veteran, harking back to 1984, and has long been a tried and tested way to access data on another server while preserving the hierarchical file and directory semantics native to Unix and Linux. Because NFS is an application-layer (Layer 7) protocol in the OSI stack, modern implementations can use either TCP or RDMA as the underlying network transport.

In this article, we’ll examine some of the innovative work VAST Data has delivered to our customers for NFS - I will not distinguish much between NFS3 and NFS4 here, although massive additions have been made to NFS4 as well. A more detailed discussion of our implementation of NFS4 features can be found in this blog post.

NFS is well defined by the standards community (NFSv3, for example, is specified in RFC 1813), and while its availability is almost universal across operating systems and networks, it has never enjoyed a solid reputation for high bandwidth performance. I often hear customers say: “Isn’t NFS a very slow and unreliable file system?” My cheeky answer is always: “NFS is not a file system, it’s a protocol - what matters is what you do at the endpoints.” VAST’s NFS implementation is blazing fast and rock-solid in reliability, with crazy good linear scaling.

Enter VAST Data

We figured throwing out the baby with the bath water made no sense. Why not leverage the simplicity, solid install base, and standards-based implementation of NFS, and improve its performance and availability to get the best of both worlds? The complexities of parallel file systems simply aren’t worth the hassle when NFS can be equally performant.

[Editorial rant: VAST Data is a software company. The VAST Data core architecture (DASE) maps a set of Docker containers (running our code) to a set of NVMe devices. The form factor that holds the containers or devices is flexible, and we have OEM relationships with a variety of providers. The front-end containers can run on a server (CNode), in a BlueField NIC, or in the same server as the NVMe devices (EBox). The software operates the same way in every case.

So I groan when someone asks “how fast is your box?” - it clearly shows me that we have failed to accurately communicate how VAST Data works. VAST Data is not a box company. The correct answer is “as fast as you need”: we can build systems to match any requirements. We have production systems with tens of thousands of nodes, up to an exabyte in a single namespace, operating in excess of 10 TB/s (yep - big B = Byte). What we discuss below is independent of the form factor and will keep improving as hardware becomes more capable.]

This article examines the different methods of accessing data on a VAST Cluster using NFS that deliver high-bandwidth performance, which is game-changing, especially in GPU-accelerated environments.

VAST Data supports 4 different modes of NFS, and the same client can use any combination of them at the same time on different mount points. They differ in the underlying transport protocol (TCP vs RDMA) and in whether they use newer upstream Linux kernel NFS features that open multiple connections between the client and the storage system.

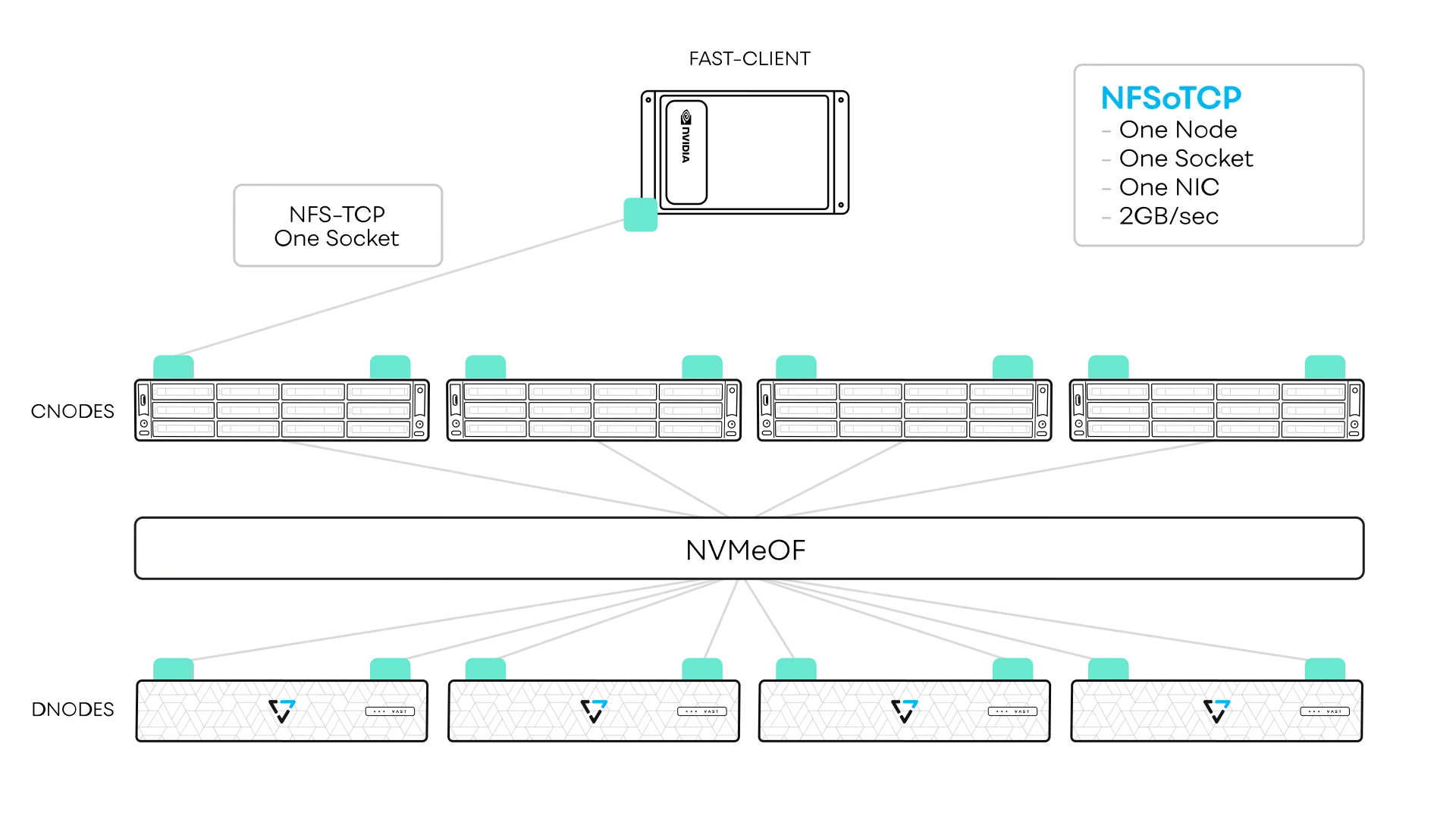

NFS/TCP

This is the existing standards-based implementation present in all Linux kernels. It sets up one TCP connection between one client port and one storage port, and uses TCP as the transport for both metadata and data operations.

```shell
# Example NFS/TCP mount (1 local port to 1 remote port):
sudo mount -o proto=tcp,vers=3 172.25.1.1:/ /mnt/tcp

# This is standards-based syntax for NFS/TCP; proto=tcp is the default.
```

While this is the easiest option to use and requires no non-standard components to be installed, it is also the least performant. Typically we see about 2-3 GB/s per mount point with large-block IOs. Performance is limited for two reasons: (a) traffic is sent to a single storage server port, and (b) the upstream client is effectively single-threaded, using 1 TCP connection per session, so once that connection saturates a client CPU core, there are no further performance gains.
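Because this mode rides on a single connection, it helps to confirm which options a mount actually negotiated before comparing performance numbers. The sketch below is a minimal example assuming a Linux client: nfs_mount_opts and nfs_mount_opt are hypothetical helper names, and they simply parse /proc/mounts-format text, where the kernel lists the effective options (such as vers and proto) for each NFS mount.

```shell
# Hypothetical helper: print the options the kernel applied to an NFS mount
# point, one per line, reading /proc/mounts-format text on stdin.
# /proc/mounts fields: device, mount point, fs type, options, dump, pass.
nfs_mount_opts() {
  awk -v mp="$1" '$2 == mp && $3 ~ /^nfs/ {
    n = split($4, opts, ",")
    for (i = 1; i <= n; i++) print opts[i]
  }'
}

# Hypothetical helper: print the value of one option (e.g. proto, vers,
# nconnect) for a mount point; prints nothing if the option is absent.
nfs_mount_opt() {
  nfs_mount_opts "$1" | awk -F= -v key="$2" '$1 == key { print $2 }'
}
```

On a client with the mount above, running nfs_mount_opt /mnt/tcp proto < /proc/mounts should print tcp; the same check works for the RDMA and nconnect mounts later in this article.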

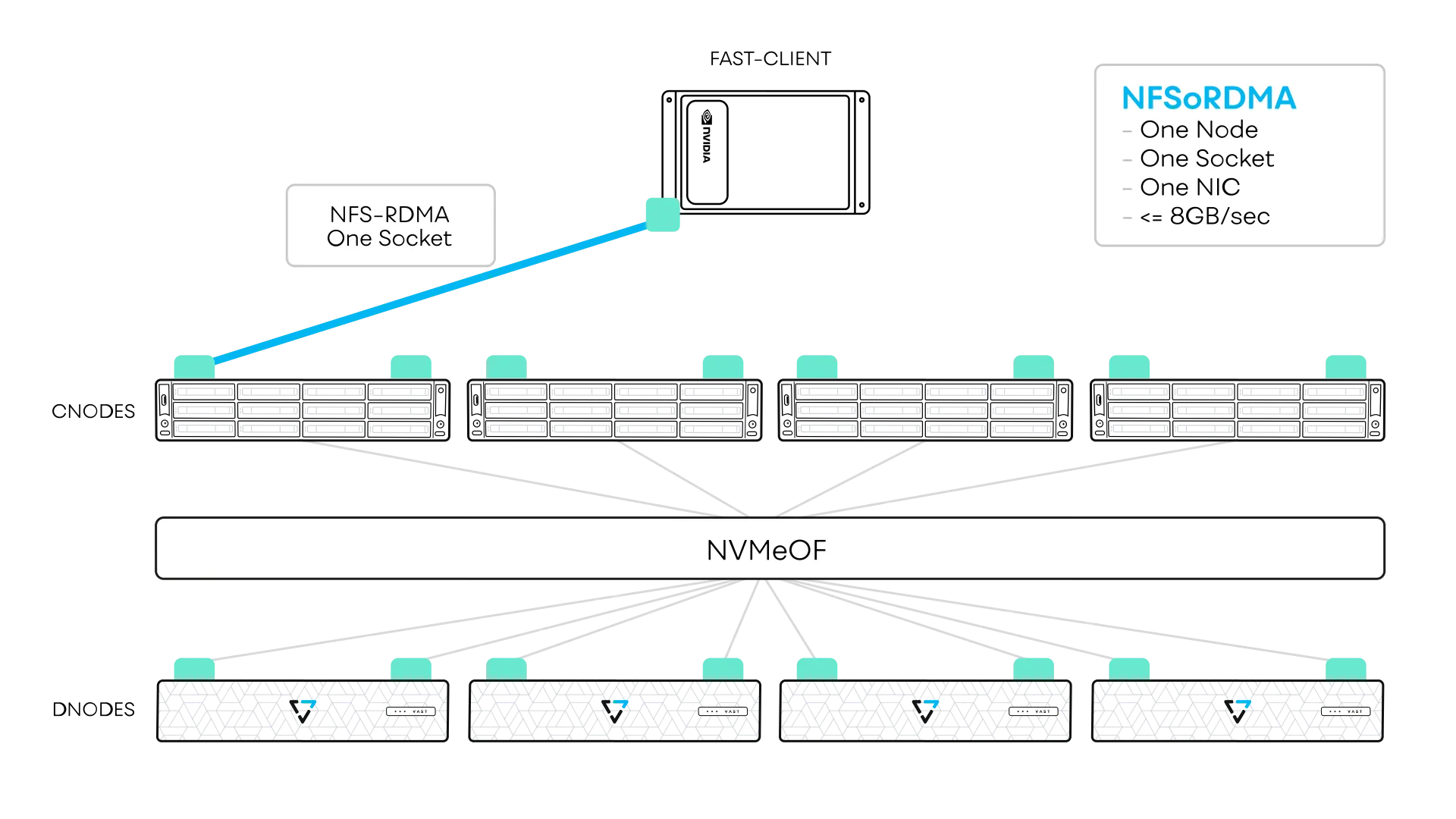

NFS/RDMA

This too has been a capability in most modern Linux kernels for many years. The connection topology is the same (a single connection between one client port and one storage port), but the data transfer uses RDMA, which increases throughput by bypassing the limitations of TCP sockets. This implementation requires:

- an RDMA-capable NIC, e.g. NVIDIA ConnectX or BlueField
- jumbo frame support in the RDMA network
- an OFED from NVIDIA (Mellanox OFED, or DOCA OFED for BlueField NICs)

Modern Linux kernels have “in-box” RDMA drivers, but VAST can also provide an enhanced version that enables additional capabilities. VAST support for this is available on both x86 and ARM CPUs, over IB and Ethernet (RoCEv2), across multiple Linux distributions (Fedora and Debian derivatives) and DOCA/Mellanox OFEDs, and on Linux kernels spanning more than 10 years.

```shell
# Example NFS/RDMA mount (1 local port to 1 remote port):
sudo mount -o proto=rdma,port=20049,vers=3 172.25.1.1:/ /mnt/rdma

# This is standards-based syntax for NFS/RDMA. Port 20049 is also the standard port for NFS/RDMA and is implemented in VAST.
```

With NFS/RDMA, we are able to achieve 8-8.5 GB/s per mount point with large IOs (1 MB), while IOPS remains unchanged relative to the standard NFS over TCP option.
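Since NFS/RDMA depends on RDMA-capable hardware, a mount script might pick its transport options based on what the client actually has. A hedged sketch: nfs_transport_opts is a hypothetical helper that treats any entry under /sys/class/infiniband (where Linux lists RDMA devices, for both InfiniBand and RoCE) as a signal to use proto=rdma,port=20049, and otherwise falls back to plain TCP.

```shell
# Hypothetical helper: choose NFS transport options for a mount.
# RDMA devices appear as entries under /sys/class/infiniband on Linux;
# the directory is a parameter so the check can be pointed elsewhere.
nfs_transport_opts() {
  local dir="${1:-/sys/class/infiniband}"
  if [ -d "$dir" ] && [ -n "$(ls -A "$dir" 2>/dev/null)" ]; then
    # NFS/RDMA conventionally listens on port 20049.
    echo "proto=rdma,port=20049"
  else
    # No RDMA device found: fall back to standard NFS/TCP.
    echo "proto=tcp"
  fi
}
```

For example: sudo mount -o "$(nfs_transport_opts),vers=3" 172.25.1.1:/ /mnt/nfs. A device being present does not guarantee that the fabric, jumbo frames, and the kernel's NFS/RDMA transport are configured, so treat this as a heuristic rather than a readiness check.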

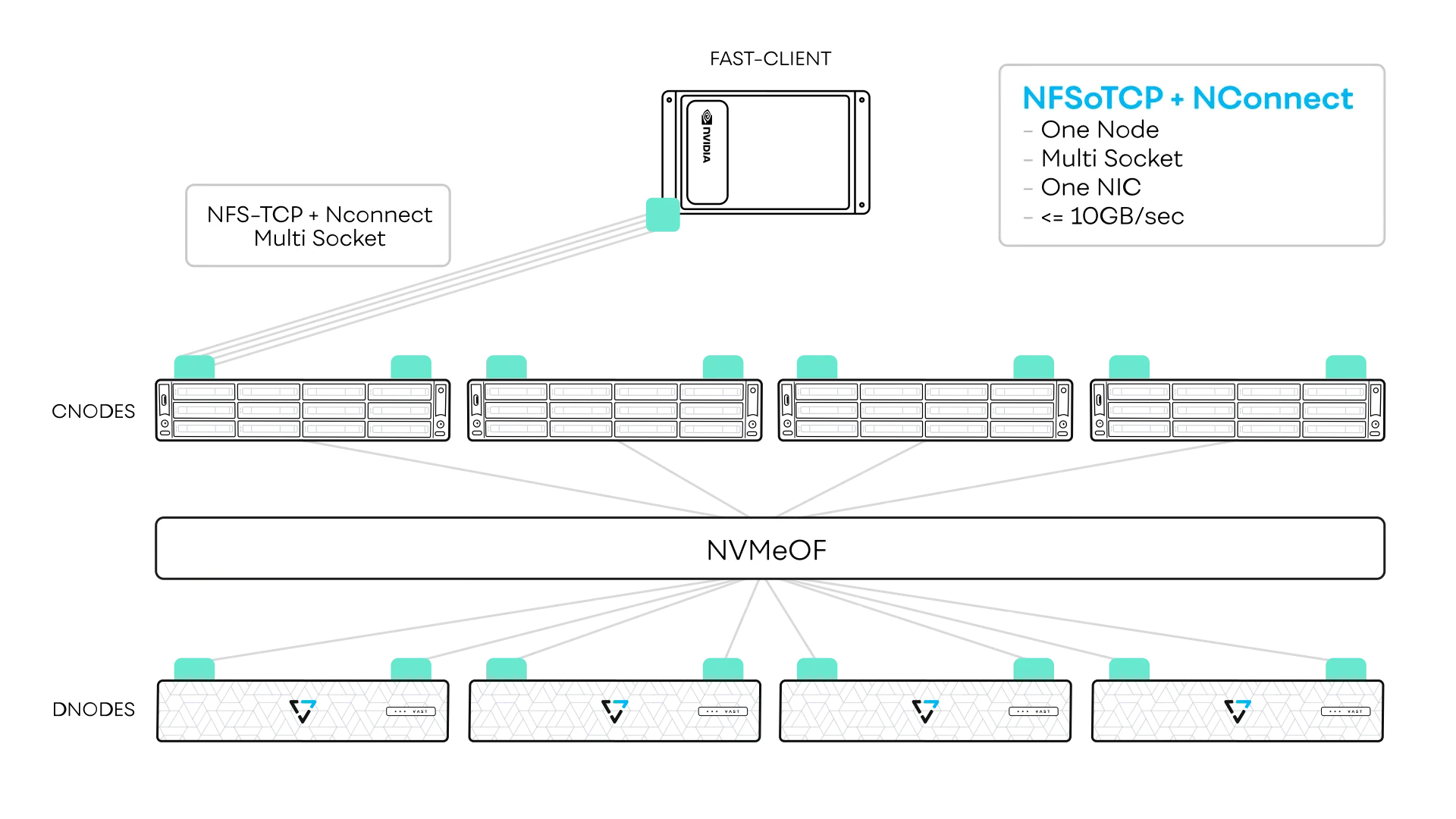

NFS/TCP (or RDMA) with nconnect

This is an upstream kernel feature, available only in Linux kernels 5.3 and later. It requires no specialized hardware (no RDMA NICs, although it works with them as well) and works “out of the box”. Here the NFS driver allows multiple connections between the client and one storage port, controlled by the nconnect mount parameter. The upstream kernel allows up to 16 connections between the client and the storage port with nconnect (VAST provides a client that supports up to 64).

The transport under NFS is TCP or RDMA, and nconnect allows the multiple connections to be serviced by multiple cores in the client CPUs. I should emphasize that these are NOT dedicated cores (which some vendors require for good performance with their native DMA clients), and the overall CPU overhead is extremely low (low single-digit percentage) even at full throttle.

```shell
# Example nconnect NFS/TCP mount for kernel 5.3+ (1 local port to 1 remote port):
sudo mount -o proto=tcp,vers=3,nconnect=8 172.25.1.1:/ /mnt/nconnect

# This is standards-based syntax for NFS/TCP. Note that upstream nconnect is limited to 16.
# Once again, proto=tcp is the default; the mount simply fails on kernels without nconnect support.
# No port is given, so the standard NFS port (2049) is used implicitly; NFSv3 also queries
# the MOUNT service (IANA-assigned port 20048). Add the "-v" flag for details if curious.
```

The upstream kernel nconnect feature can deliver close to line rate on a single 100 Gb NIC. For example, we achieved 11 GB/s on a single mount point with a Mellanox ConnectX-5 100 Gb NIC. These days we have NVIDIA ConnectX-7 NICs capable of 200 Gbps per port on the front-end CNodes, so even higher speeds are possible with nconnect alone when clients have faster NICs.
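Since nconnect depends on the kernel version, a provisioning script may want to check for it before adding the option. A minimal sketch, assuming the upstream 5.3 cutoff: kernel_has_nconnect is a hypothetical helper that compares the major.minor part of a kernel release string (by default the output of uname -r) against 5.3.

```shell
# Hypothetical helper: succeed if a kernel release is 5.3 or later, the
# upstream release that introduced the nconnect mount option.
# Defaults to the running kernel.
kernel_has_nconnect() {
  local release="${1:-$(uname -r)}"
  local major minor
  major=${release%%.*}          # "5.15.0-91-generic" -> "5"
  minor=${release#*.}           # -> "15.0-91-generic"
  minor=${minor%%[!0-9]*}       # -> "15" (strip from first non-digit)
  [ "$major" -gt 5 ] || { [ "$major" -eq 5 ] && [ "$minor" -ge 3 ]; }
}
```

Usage: kernel_has_nconnect && echo "nconnect available". Distribution kernels sometimes backport features, so a version number is only a heuristic; checking the effective options after mounting is more reliable.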

Multipath NFS/RDMA or NFS/TCP

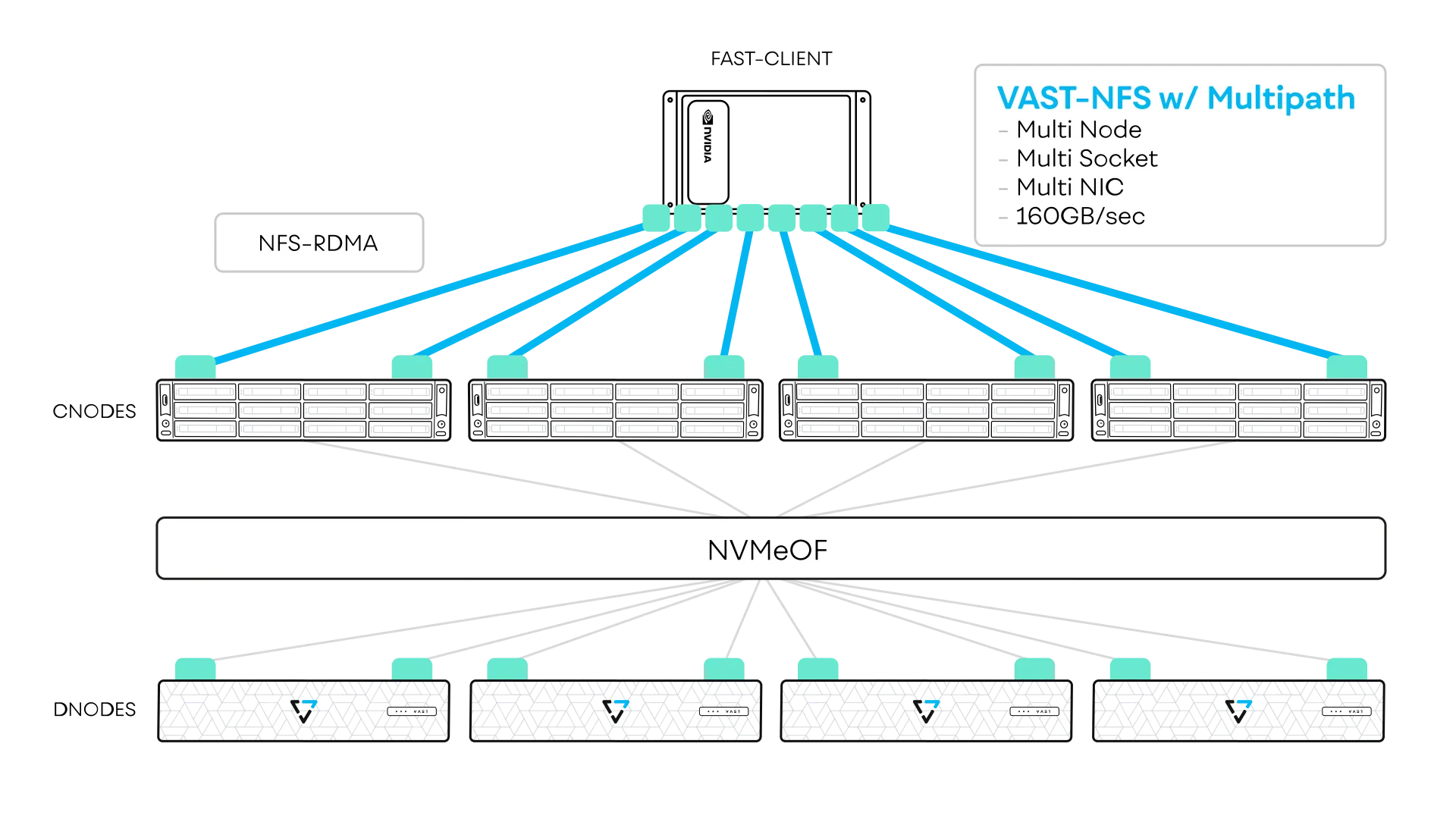

By now, the astute reader will have figured out that all three options we have discussed share a common topological constraint: each client NIC port can only talk to one server NIC port, limiting the performance of a mount point to what a single server NIC can deliver.

What if ALL the client NIC ports on a node could talk to ALL the server NIC ports? Magic! Every front-end node in a VAST Cluster can access the same namespace, after all, so IOs can be issued to any node. In theory (and in practice) we can saturate every set of client NICs and therefore deliver full line rate to a single mount point on the client, as long as the VAST cluster is sized to deliver it.

This core ability is what we refer to as “multipath”. And people thought (and many will still insist) that NFS was “slow” and that a parallel FS was needed to do this, with proprietary DMA clients! VAST NFS has irreversibly smashed that misconception. This was the breakthrough (5 years ago) that made VAST the first NFS server to pass SuperPOD certification. More on this later.

Here, nconnect again provides multiple connections between the client and the storage - however, the connections are no longer limited to a single storage port, and can go to any number of storage ports that serve that NFS filesystem. Load balancing and HA capabilities are built into this feature as well, and for complex systems with multiple client NICs and PCIe switches, NIC affinity is implemented to ensure optimal connectivity inside the server.

A key differentiator of multipath is that the nconnect feature is no longer restricted to 5.3+ kernels: it has been backported to older kernels (3.x and 4.x) as well, making these powerful features available to a broad ecosystem of deployments. The mount syntax differs from a normal NFS mount in a few ways, as the example below shows.

```shell
# Example multipath mount (4 local ports to 8 remote ports):
sudo mount -o proto=rdma,port=20049,vers=3,nconnect=8,localports=172.25.1.101-172.25.1.104,remoteports=172.25.1.1-172.25.1.8 172.25.1.1:/ /mnt/multipath

# The code changes in this repository add the following parameters:
#   localports   A list of IPv4 addresses for the local ports to bind
#   remoteports  A list of IPv4 addresses for the remote ports to bind
# IP addresses can be given as an inclusive range, with '-' as a delimiter,
# e.g. 'FIRST-LAST'. Multiple ranges or IP addresses can be separated by '~'.
```

The performance we achieved for a single client with a single mount, 5 years ago, far exceeded any other approach, allowing us to pass the NVIDIA BasePOD and SuperPOD reference architecture certifications easily. We saw up to 162 GiB/s (174 GB/s) with GPUDirect Storage on a single DGX A100 client with 8x200 Gbps NICs.
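To make the '-' and '~' range syntax for localports and remoteports concrete, the sketch below expands a spec into individual addresses. expand_ports is a hypothetical helper (not part of the VAST NFS Client) that could be used to sanity-check a spec before mounting; for simplicity it assumes both ends of a range differ only in the last octet.

```shell
# Hypothetical helper: expand a localports/remoteports-style spec into one
# IPv4 address per line. Items are separated by '~'; each item is either a
# single address or an inclusive range 'FIRST-LAST'.
# Assumption: range endpoints share the same first three octets.
expand_ports() {
  local spec="$1" item first last prefix lo hi i
  local IFS='~'
  for item in $spec; do
    case "$item" in
      *-*)
        first=${item%-*}       # text before the '-'
        last=${item#*-}        # text after the '-'
        prefix=${first%.*}     # first three octets
        lo=${first##*.}        # last octet of FIRST
        hi=${last##*.}         # last octet of LAST
        i=$lo
        while [ "$i" -le "$hi" ]; do
          echo "$prefix.$i"
          i=$((i + 1))
        done
        ;;
      *) echo "$item" ;;
    esac
  done
}
```

For example, expand_ports 172.25.1.101-172.25.1.104 prints the four local addresses from the multipath example, one per line.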

Today, with much more powerful servers and client NICs, this is a moving target. However, in every case we have tested, we have been able to saturate the client's network connectivity on a single mount point.
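Saturating the line comes down to simple arithmetic: divide the link rate in gigabits per second by 8 to get gigabytes per second. A small sketch with hypothetical helper names, applied to the figures quoted earlier in this article; it ignores protocol overhead, which is why achieved numbers land somewhat below the raw rate.

```shell
# Hypothetical helper: convert a link rate in Gb/s to GB/s (8 bits per byte),
# ignoring protocol overhead.
gbps_to_gbytes() {
  awk -v gbps="$1" 'BEGIN { printf "%.1f\n", gbps / 8 }'
}

# Hypothetical helper: achieved GB/s as a percentage of a raw link rate in Gb/s.
pct_of_line_rate() {
  awk -v got="$1" -v gbps="$2" 'BEGIN { printf "%.0f\n", 100 * got / (gbps / 8) }'
}
```

gbps_to_gbytes 1600 prints 200.0 for 8x200 Gbps, so the 174 GB/s single-client result is about 87% of raw line rate (pct_of_line_rate 174 1600 prints 87), and the 11 GB/s nconnect result on one 100 Gb NIC is about 88% (pct_of_line_rate 11 100 prints 88).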

Additionally, because all the CNodes participate in delivering IOPS, an entry-level 4-CNode system (with the latest AMD Turin CNodes) can deliver over 1.1 million 4K IOPS to a single client on a single mount point. Again, this is a moving target: as new processors advance, so do we. The system is designed to scale this performance linearly as more CNodes participate.

The VAST NFS Client

All of the above highlights the performance gains for IOPS and bandwidth, but this was just the first step in our journey to enhance NFS in an open fashion for the world. The VAST NFS Client, which makes multipath NFS and all its advanced features possible, is worth examining now. The reader is urged to take a look at the documentation for the VAST NFS Client to learn more details, but let’s discuss some of the very cool things the VAST R&D teams have developed. Some crucial facts about the VAST NFS Client: the code is 100% open source and free, and unlike a native DMA client, it is not tied to the VAST AI OS at all. So much so that other vendors have now started using it to get around limitations of their own NFS implementations and achieve their own SuperPOD certifications.

They say imitation is the sincerest form of flattery, and we welcome it! That was the intent behind open-sourcing the code: to make NFS better for everyone, not just our customers. We have also contributed several pieces of this work to the upstream Linux community.

Some salient features:

- Client support for Intel (x86-64), AArch64 (ARM), RISC-V, POWER, s390x and more
- Support for both NFS3 and NFS4 (later kernels only)
- Support for NFS with TLS on RHEL 9/Rocky 9 and Ubuntu 24.04
- The vastnfs-ctl utility, which provides a simple control plane for client installation, monitoring and configuration

Many new mount options have been developed beyond the localports/remoteports I have written about. Some of the more important ones (see details):

- forcerdirplus: Issues READDIRPLUS metadata calls, which are advantageous over READDIR in directory-listing use cases.
- noextend: Turns off the default extend-to-page optimization for writes, which allows more efficient write IOs; particularly useful for HPC MPI-IO codes.
- spread_reads and spread_writes: Control whether IO to a single file uses a single connection or multiple connections. These are often very useful for balancing all the front-end IO from multiple clients evenly across all the CNode ports in the cluster.
- relmtime: Ensures that stat() calls are not blocked waiting for pending writes.

There are many more options to study and experiment with. These improve not just IO performance but metadata performance as well, extending the performance gains that many applications need. Many HA features (such as noidlexprt and localports_failover) and usability features (such as specifying localports by interface name instead of IP address, which is super useful in large GPU cluster automation) have also been added to enhance stability and ease of use.
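As mount options accumulate, a typo such as a space after a comma (which splits the -o argument in two) becomes easy to make. A minimal sketch that assembles the option string and prints the resulting command: join_opts is a hypothetical helper, /mnt/vast is a placeholder mount point, and passing spread_reads, spread_writes and forcerdirplus as bare flags without values is an assumption; check the VAST NFS Client documentation for the exact syntax.

```shell
# Hypothetical helper: join arguments with commas, as mount -o expects
# (a single argument, no spaces after the commas).
join_opts() {
  local IFS=,
  printf '%s\n' "$*"
}

# Illustrative only: flag syntax for the VAST-specific options is assumed.
opts=$(join_opts vers=3 proto=rdma port=20049 nconnect=8 \
  remoteports=172.25.1.1-172.25.1.8 spread_reads spread_writes forcerdirplus)

# Print the command rather than running it (mounting requires root).
echo "sudo mount -o $opts 172.25.1.1:/ /mnt/vast"
```

The script only prints the command, since an actual mount requires root and a reachable cluster.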

Conclusion

These connectivity options are powerful methods for accessing data over a standards-based protocol, NFS. The modern variants, and the innovation that VAST Data has brought to the forefront, have changed the landscape of what NFS is capable of.

All these approaches are available with the VAST Data AI OS. Some are standard, some have kernel limitations, and some need RDMA support and/or some (free) software from VAST Data. VAST Data is constantly working with the upstream NFS maintainers to contribute our code to the Linux kernel.

General References