First, let me say that as an emerging player in the storage market, VAST Data started with only respect and reverence for the early incarnation of Pure Storage. The early leadership team built a great company that executed in a way that can only be described as… great. Pure was the company we looked up to when we started building VAST.

Fast forward 10 years, and much of that core team has since gone. While some Puritans have converted to VASTronautism, others have gone off to different pastures. What is left is, well… I’m not sure. What is clear, though, is that the shine from the early days of Pure has worn off the apple.

It’s with a sense of both pride and disappointment that I report today on Pure’s newest FlashBlade//S unveiling. I’m proud because the student is now teaching the master – and Pure is clearly now taking pages from the VAST playbook. Imitation is the sincerest form of flattery, and it is the best evidence that we are doing something right. I’m also sad because the announcement they’ve made today is not only disingenuous, it is also uninspiring. It’s simply: not great.

Some context: Since we started selling in 2019, we have generally not lost deals to FlashBlade, especially when the choice was based on technical merits. As a technologist, I believe that there are only disadvantages to FlashBlade vs the VAST Data Platform when you stack up our respective features, price and scaling/performance capability. But Pure also has a larger global sales footprint and leverages the portfolio sale to get FlashBlade in the door when customers choose their exceptional FlashArray product. We’re not everywhere, and not every customer buys on the basis of technical merit.

As we’ve been on our winning streak, Pure has been busy prepping this new announcement for months now, and we were all anxious to understand if they’d bring the thunder. I could spend 3 pages writing about all of the wrongs in today’s FlashBlade//S announcement, but I’m just going to say a few things and then go back to slugging it out in the customer boardroom.

Point 1: The disaggregated & stateless claims made by Pure are utterly ridiculous

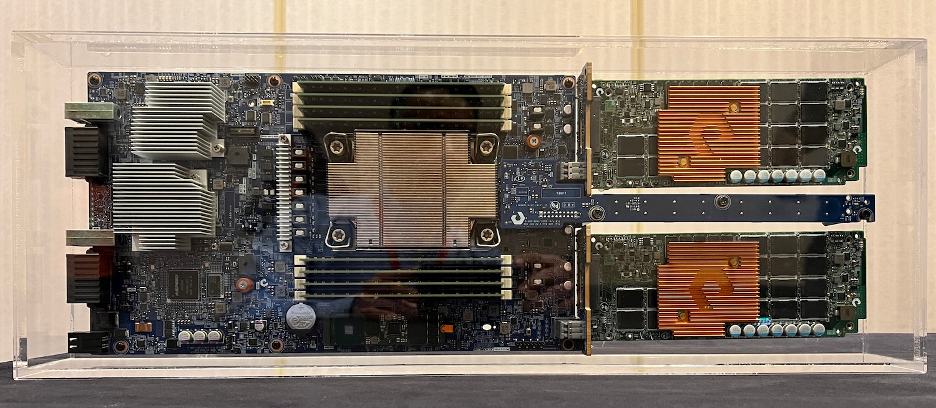

When a server is connected to an SSD, and the only way to access that SSD is through that server, that server is decidedly stateful. With Pure’s new offering, there is no network-attached access to a logical pool of devices over disaggregated transports (such as NVMe over fabrics), and when that server fails, the devices attached to it are no longer present in the cluster. Pure today announced a “disaggregated architecture”, but what they really mean is that blades now borrow from the same SSDs used by the FlashArray product line and customers have a choice of drive configuration. They’ve normalized their supply chain and have tried to skin it as an architecture improvement. In reality, Pure has now caught up to the Isilon of 2003 with this move, which is when Isilon first introduced nodes with different direct-attached drive options. That’s a step forward from having blades with NAND soldered onto them, but still leaves a lot to be desired.

A true disaggregated architecture can add CPUs without adding storage, and storage without adding CPUs, saving money when only FLOPs are needed or when only capacity is needed.

A true disaggregated architecture doesn’t force customers to choose between compression efficiency and performance. You can have both when CPUs are independently scalable.

A true disaggregated architecture doesn’t go into degraded mode when a node goes offline. In Pure’s case, when a blade fails, as much as 192TB disappears from the cluster until that node returns to full health. This is the precise definition of direct-attached.
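To make the failure case concrete, here is a toy Python sketch. It is only an illustration under stated assumptions, not a model of how either product is actually implemented: the function names, the three-node cluster and the node names are hypothetical, and the only number taken from the text is the 192TB per-blade figure.

```python
# Toy model: how much capacity stays reachable after a node failure.
# Not a model of any vendor's actual software; all names are hypothetical.

BLADE_TB = 192  # per-blade figure quoted in the text


def reachable_direct_attached(node_capacity_tb, failed):
    """Drives sit behind one node each, so a failed node hides its drives."""
    return sum(tb for node, tb in node_capacity_tb.items() if node not in failed)


def reachable_shared(compute_nodes, enclosure_capacity_tb, failed):
    """Every compute node reaches every drive over the fabric, so capacity
    stays reachable as long as at least one compute node is still up."""
    survivors = [n for n in compute_nodes if n not in failed]
    return sum(enclosure_capacity_tb) if survivors else 0


# Assumed example cluster: three nodes with 192TB behind each.
nodes = {"node-a": BLADE_TB, "node-b": BLADE_TB, "node-c": BLADE_TB}
failed = {"node-b"}

direct = reachable_direct_attached(nodes, failed)
shared = reachable_shared(list(nodes), list(nodes.values()), failed)

print(direct)  # 384
print(shared)  # 576
```

In the direct-attached case, one failed node takes its 192TB with it. In the shared case, the same failure costs compute but no capacity. The sketch deliberately ignores drive and enclosure failures, data protection and rebuild traffic.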

We announced the world’s first Disaggregated, Shared-Everything architecture in 2019, and this architecture continues to pay dividends in terms of parallelism, lack of cache coherency deadlocks, the ability to execute global efficiency algorithms, true independent scaling of CPUs and NVMe devices… the list goes on. If you want to learn more, read our white paper.

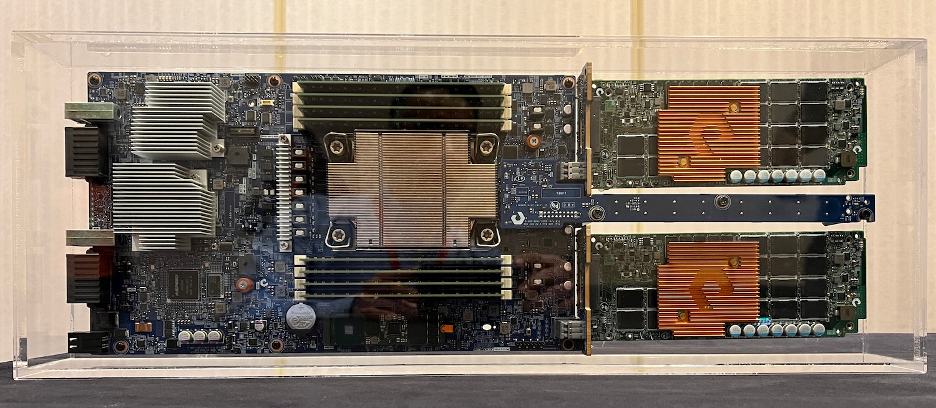

This is a server with direct-attached storage devices on it (aka: a FlashBlade).

Point 2: Pure’s new exabyte-scale system can scale all the way to 20PB

[jeff whips out his TI calculator] [jeff also sucks at math, got an F in calculus… twice]

20PB is 1/50th of an exabyte. At scale, FlashBlade//S clusters can support (wait for it..) 400 drives across 10 chassis.

I… can’t… grrr…

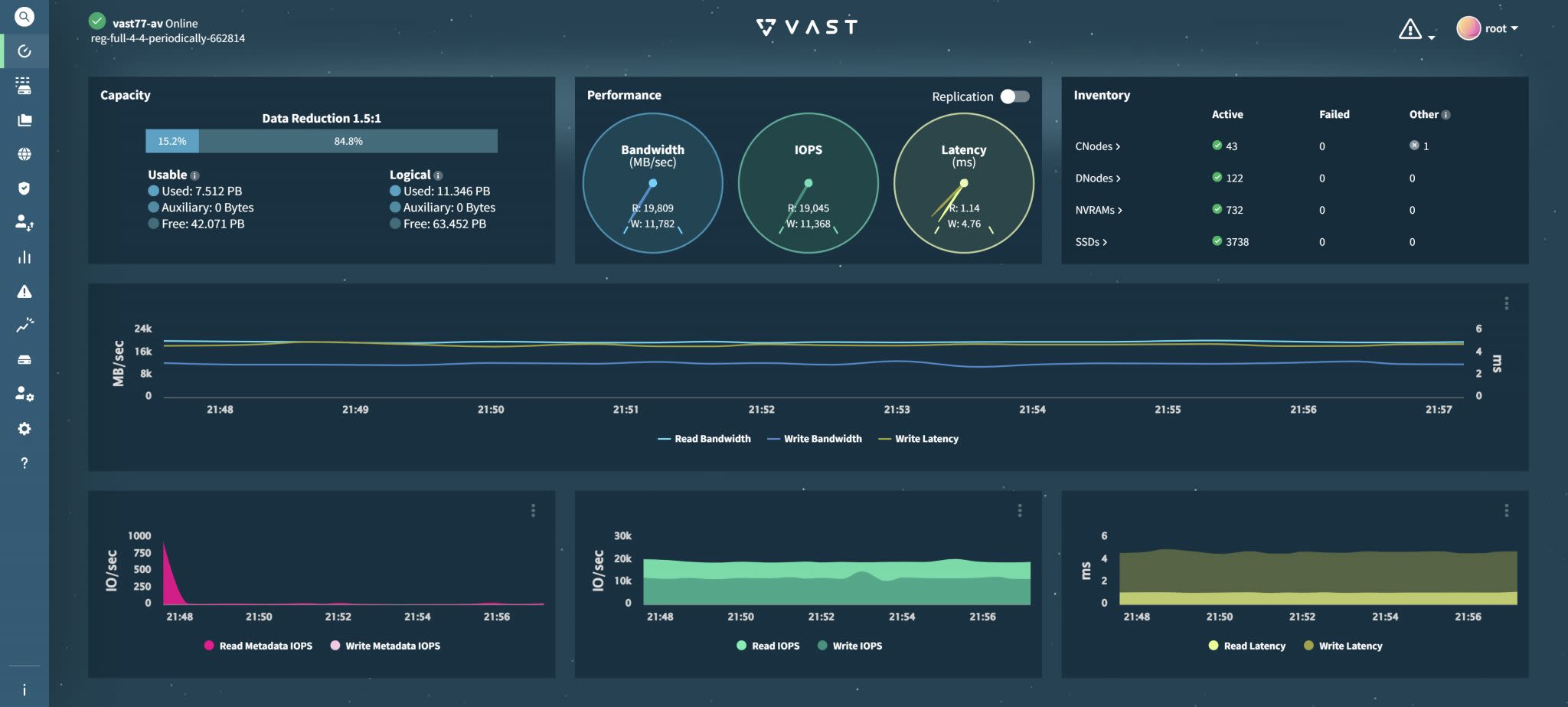

OK. One of our team members just took a snapshot of a relatively quiet QA system… Here’s a VAST cluster running with nearly 4,000 NVMe devices. That’s nearly 10x Pure’s max.

This is what a cloud of flash using composable, disaggregated storage processors should look like. Oh, and to Point #1, each of our containers (CNodes) is mounting all 3,728 SSDs simultaneously. Our shared-everything architecture is the basis for an embarrassingly-parallel level of scale. Without the node-to-node chatter that shared-nothing systems need across a bunch of direct-attached nodes, scale is born from a new and fundamentally simpler system architecture.
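For anyone who wants to check the math in this section, here is a short Python sketch. It only restates figures quoted above (20PB, 400 drives, 3,728 SSDs); the decimal convention of 1,000PB per exabyte is an assumption, and the variable names are illustrative.

```python
from fractions import Fraction

PB_PER_EB = 1000             # assumption: decimal units, 1 EB = 1,000 PB
flashblade_max_pb = 20       # maximum cluster capacity quoted above
flashblade_max_drives = 400  # maximum drive count quoted above
vast_qa_drives = 3728        # SSD count of the QA cluster quoted above

# Share of an exabyte that a 20PB cluster represents.
fraction_of_exabyte = Fraction(flashblade_max_pb, PB_PER_EB)

# How many times more drives the QA cluster runs than the 400-drive maximum.
drive_ratio = vast_qa_drives / flashblade_max_drives

print(fraction_of_exabyte)    # 1/50
print(round(drive_ratio, 2))  # 9.32
```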

Customers are now routinely coming to VAST for 100PB+ clusters. These organizations demand data management simplicity at scale and want to avoid silos/islands of information that come from having small and limited storage clusters. They also want to be able to survive a full SSD chassis failure. All of this is possible with VAST – but not with Pure. Data growth is a constant, and customers shouldn’t have to plan around architecture constraints or resilience limitations.

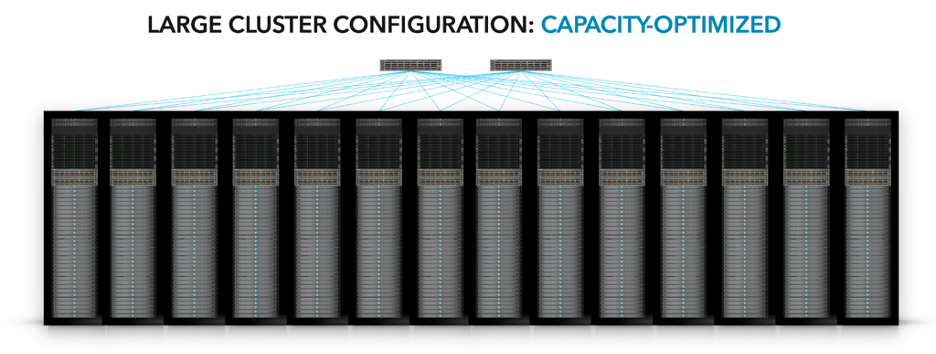

If you’re going to advertise an “exabyte-scale system”, it should actually be able to support exabytes of capacity… and it should achieve exabyte-caliber rack-scale resilience. Here’s what an exabyte-class VAST cluster actually looks like; it’s one we’re architecting for an AI hyperscale project:

900PB @ 3:1 • 5TB/s of performance • 14 Racks • 270W/PB

Point 3: The VAST SCM “crutch” is our not-so-secret advantage

I love Chris Mellor… you always get the direct read-out from the vendor.

“No SCM crutch,” as a Pure briefing slide said, possibly in a poke at VAST Data.

Source: Blocks and Files

Using storage class memory as a shared, global resource allows our system to:

Reduce QLC write latency by 90 percent: savings without the performance hit

Extend endurance & HW lifecycles for up to 10 years, which we guarantee

Unlock new efficiencies by building richer metadata structures that enable global compression on a shared resource that is a fraction of the cost of DRAM

Eliminate any volatile data storage and the need for supercap power protection

Provide the cluster the luxury of time to perform computationally intensive global data reduction inline, without having to wear down low-endurance QLC through some post-process operation after the data has already been written to flash

Pure “avoids” the need for SCM by relying on a far more volatile, expensive and limited crutch … which is DRAM. The limited space to manipulate data leaves them, 5 years later, without any meaningful data reduction story. Need evidence? Look again on the right at all of those supercaps. Non-volatile systems such as VAST’s don’t need expensive memory, and we don’t need supercaps or batteries.

supercaps. supercaps. supercaps. supercaps. supercaps. supercaps. all the supercaps!!!

Just ask them yourself.

Every Pure salesperson is out now evangelizing the merits of this architecture to customers new and old. We agree with their high-level selling points, as they are essentially evangelizing OUR architecture. That said, you can’t will a legacy shared-nothing architecture into becoming modern just by saying it’s modern over and over again. If you find yourself on the receiving end of a FlashBlade//S pitch, ask them a few simple questions:

Does my ‘disaggregated’ SSD stay online when a node has failed or is being rebooted?

When I Evergreen, am I also forced to take data offline and enter into a rebuild state?

Can I size CPUs truly independently of capacity, or do I need to buy CPUs as I add SSDs?

Can I compose pools of stateless CPUs to create true isolated QOS?

Can I survive rack scale failures? Can I survive a full chassis failure?

What if my data growth requires more than 20PB or 400 SSDs of capacity?

Where is any global data reduction that even remotely resembles VAST’s?

How does cache coherency stand up to parallel I/O?

Does Pure guarantee the endurance of their QLC for 10 years?

If you don’t use a memory crutch, what’s with all them supercaps?

How is this new evolution of FlashBlade different than what Isilon announced in 2003?

After five years of waiting for something new, we now see the limits of a shared-nothing direct-attached architecture and how architecture can really limit the evolution of a storage product. I think that’s the key word… “evolution”. While the FlashBlade//S announcement was billed as a “Revolutionary Platform”, today’s announcement is evolutionary, at best.

I miss the old Pure.

– Jeff