Introduction

The world of Artificial Intelligence was rocked circa 2012 when Geoffrey Hinton’s team at the University of Toronto used GPUs for Neural Network processing, and Alex Krizhevsky’s Ph.D. work won the ImageNet object recognition challenge with them. The results, as everyone knows, were stunning: a top-5 error rate of roughly 15%, more than 10 percentage points better than the next-best entry, which relied on other machine learning techniques. This started an explosion of research and applications in Neural Networks for a variety of purposes, especially Convolutional Neural Networks (CNNs), in the field now referred to as Deep Learning.

While doing justice to this vast field is beyond the scope of this article, a good starting point for the reader is the Wikipedia Deep Learning article, which chronicles the history and current state of this field. Yoshua Bengio, Geoffrey Hinton, and Yann LeCun were awarded the 2018 ACM Turing Award for their seminal contributions to this field, and subsequently, Geoffrey Hinton was awarded the 2024 Nobel Prize in Physics. NVIDIA has been at the forefront of this revolution since 2009, with its GPUs as the backbone technology for much of this work.

As GPUs became more powerful, far outstripping advances in CPU processing, new bottlenecks appeared in processing architectures. It became apparent that moving data between main memory (SYSMEM) and GPU memory, as well as GPU-to-GPU data movement, was increasingly the limiting factor in keeping the GPU cores busy.

This prompted NVIDIA to introduce a set of technologies, referred to as GPUDirect, that use Remote Direct Memory Access (RDMA) to bypass the SYSMEM/CPU complex and enable direct communication between GPUs, both within a server and across servers. This allowed multiple GPUs on a system, and across systems, to cooperate in training large models. But it still left a limitation in moving data from the IO subsystem to the GPU complex, which traditionally had to use SYSMEM as a “bounce buffer” to get data to GPU memory.

NVIDIA then began to develop an extension, GPUDirect Storage (GDS), to enable direct movement of data from the storage subsystem to GPU memory when appropriate, bypassing SYSMEM. A good starting point for becoming acquainted with these technologies is the NVIDIA GTC 2020 Conference slides for MagnumIO and GPUDirect for Storage. For deeper details, please study the GPUDirect for Storage Design Guide.

So what is GPUDirect Storage (GDS)?

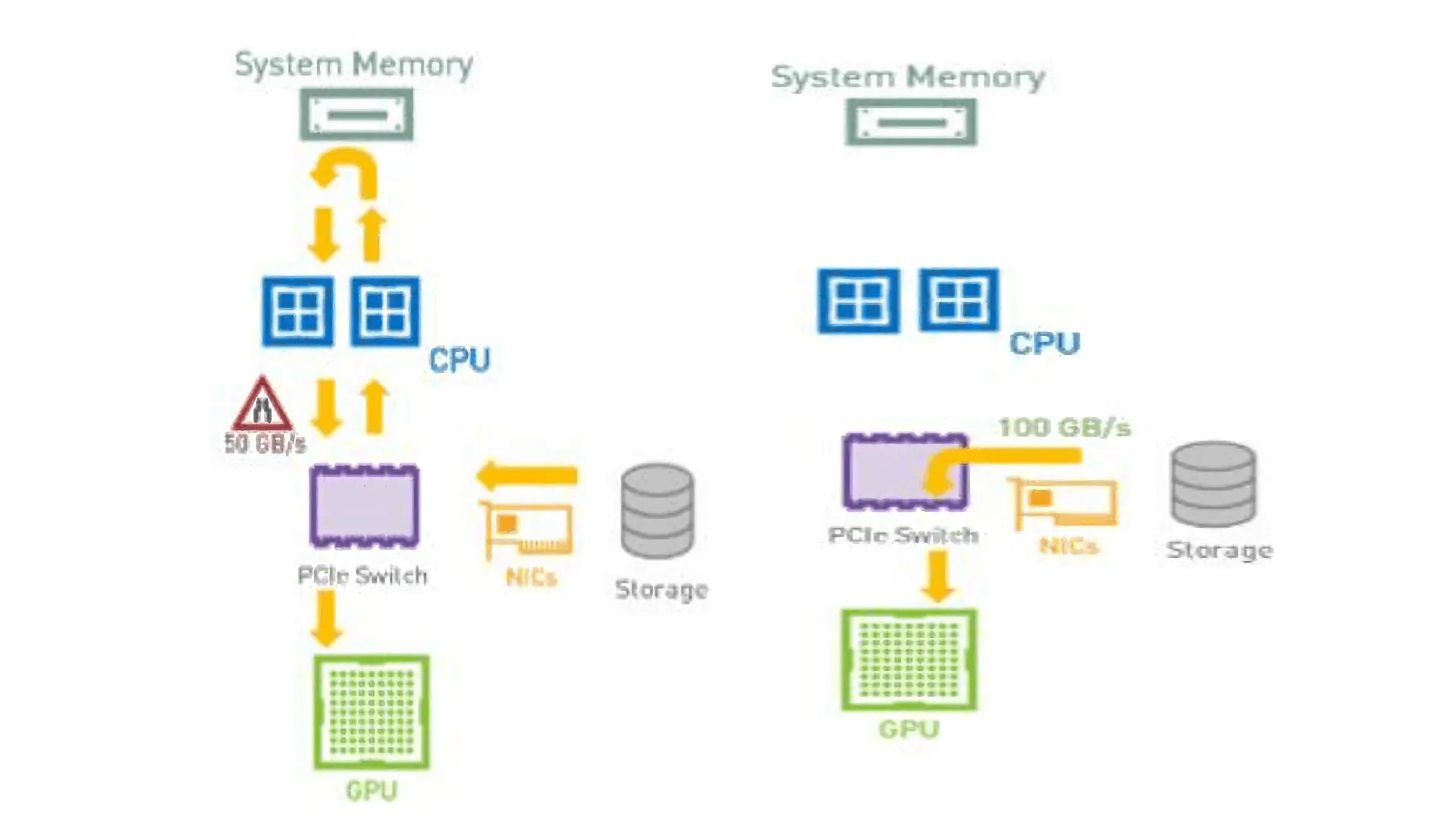

Traditionally, data from Storage flows to the GPU through a NIC to the CPU (through a PCIe Switch complex in the larger, purpose-built NVIDIA DGX Systems) and into SYSMEM, after which it is moved to GPU memory for further processing, as shown in Figure 1. Unless some pre-processing is needed in the CPU, this is an unnecessary limitation in several ways.

First, it is an unneeded hop for the data between SYSMEM and GPU. Next, the operation requires precious CPU resources that could be used for other processing. Lastly, the aggregate bandwidth the storage can provide to the system is limited by the internal SYSMEM-GPU bandwidth, which is typically significantly less than the bandwidth a well-architected storage system can provide through the multiple NICs on the system. For example, on an NVIDIA DGX-2 system, we have observed a maximum of around 50 GiB/s of internal bandwidth, while the 8 x 100 Gb IB NICs can deliver close to twice that.

GDS solves that issue elegantly, again by exploiting the DMA or RDMA capabilities of the storage. As Figure 2 shows, if the storage subsystem supports (R)DMA, it can be instructed to move data directly to a GPU memory address instead of a SYSMEM address, thus bypassing the CPU/SYSMEM complex altogether. This permits data transfer to the system at near line speeds, as it is no longer limited by the internal memory bandwidth.
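The bandwidth argument above can be checked with some quick arithmetic. The following is a minimal sketch, not a benchmark: it assumes the storage can saturate the NICs, ignores protocol overhead, and models each path as being capped by its slowest stage. The function names are illustrative only.

```python
from typing import Optional

GIB = 2**30  # bytes per GiB


def nic_aggregate_gib_s(num_nics: int, gbit_per_nic: float) -> float:
    """Aggregate NIC line rate in GiB/s (1 Gb = 1e9 bits)."""
    bytes_per_second = num_nics * gbit_per_nic * 1e9 / 8
    return bytes_per_second / GIB


def deliverable_gib_s(storage_gib_s: float, bounce_gib_s: Optional[float]) -> float:
    """Throughput is capped by the slowest stage the data passes through.

    bounce_gib_s=None models the direct path that skips the SYSMEM bounce buffer.
    """
    if bounce_gib_s is None:
        return storage_gib_s
    return min(storage_gib_s, bounce_gib_s)


# Figures quoted in the text for a DGX-2: 8 x 100 Gb IB NICs and
# roughly 50 GiB/s of observed internal SYSMEM-GPU bandwidth.
nics = nic_aggregate_gib_s(8, 100)
traditional = deliverable_gib_s(nics, 50.0)
direct = deliverable_gib_s(nics, None)

print(f"NIC aggregate:        {nics:.1f} GiB/s")
print(f"Via bounce buffer:    {traditional:.1f} GiB/s")
print(f"Direct to GPU memory: {direct:.1f} GiB/s")
print(f"Ratio:                {direct / traditional:.2f}x")
```

With these inputs the NIC aggregate works out to about 93 GiB/s, which is consistent with the "close to twice" the internal bandwidth quoted above.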

Enter VAST Data

VAST Data is a modern all-flash NVMe architecture that breaks the trade-off between performance, scale, and cost. By using a combination of NVMeoF, Storage Class Memory, and QLC NAND technology in a cache-less architecture, we have delivered record-breaking performance, hyper-linear scalability, and costs approaching the economics of hard-drive based systems. A VAST Cluster presents itself to client systems through industry standard protocols – NFS, NFS over RDMA, SMB, and S3, with support for a Kubernetes CSI in a container-driven world.

When we started to work with NVIDIA in early 2020, we quickly realized, along with NVIDIA GDS Engineering, that we were exposing ourselves as an RDMA target to the DGX-2 systems we had been testing. NVIDIA GDS engineers had already experimented with GDS working on NFS systems that support RDMA, and had been on the lookout for a commercial storage system that supported it. We were eager to test this, and astonishingly, VAST NFS over RDMA worked with GDS literally out of the box – we had usable results in a few hours with no modifications to our system.

This started a highly productive collaboration between us, and with some larger systems, we quickly showed that we can achieve line-rate bandwidth with a DGX-2 system. Figure 3 below shows the VAST UI while serving a DGX-2 system with 8 x 100 Gb HDR100 IB NICs at a sustained 94+ GB/s of read throughput with a 1 MB IO size (we have recorded as high as 98 GB/s). One of the key points to note here is that this throughput was delivered via a single NFSoRDMA mount point to a single client DGX-2 system, which is unprecedented for NFS.

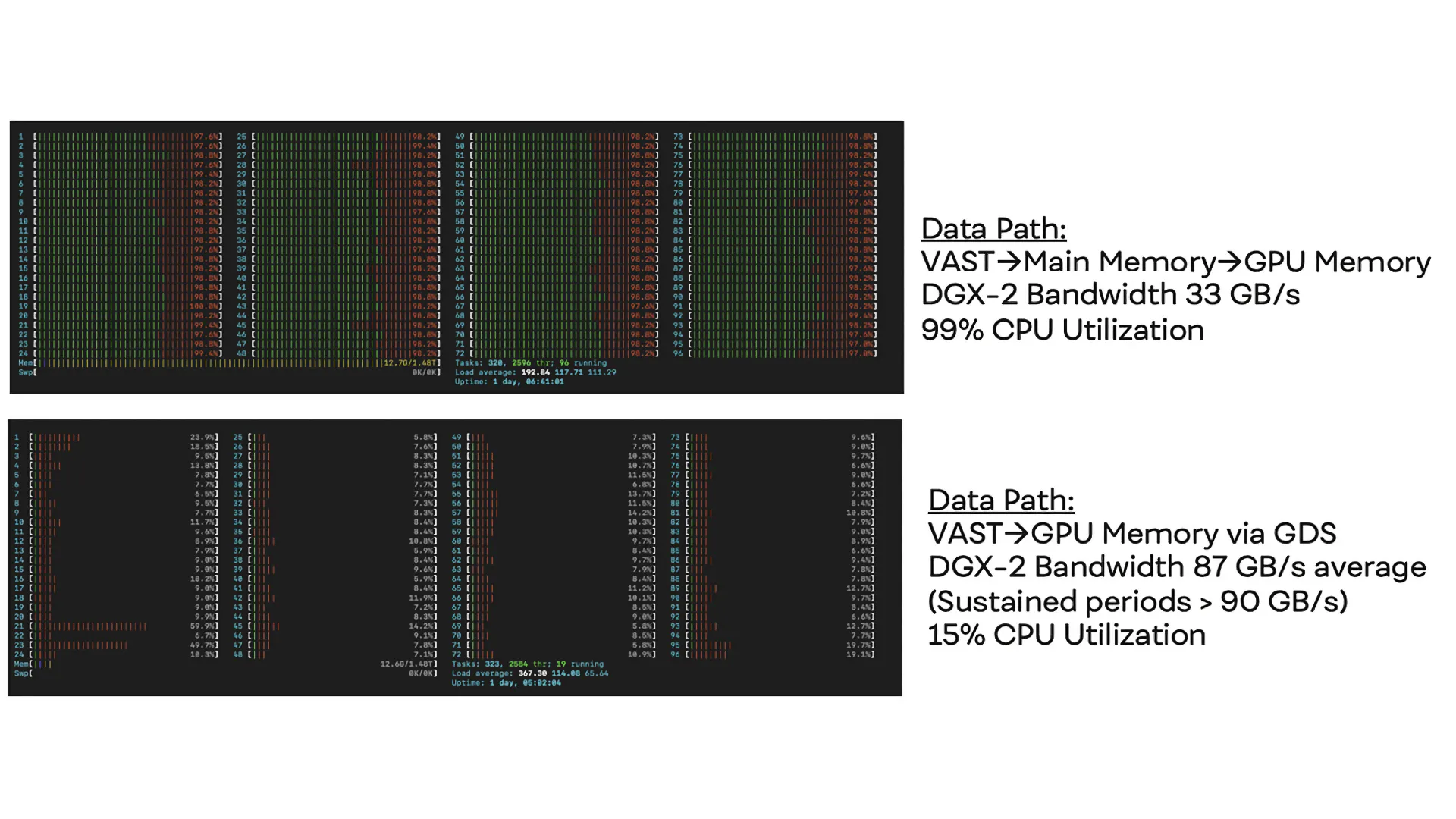

Probably even more interesting was the dramatic effect that bypassing CPU/SYSMEM had on the CPU utilization of the system. Figure 4 shows data moving to the GPU using the traditional path (as shown in Figure 1) and achieving no more than 33 GB/s before saturating the CPU. In contrast, in Figure 5, we get near line-rate bandwidth with only 15% CPU utilization, as seen in htop.

The Next Chapter: GDS with the DGX A100

In the middle of 2020, NVIDIA announced a new DGX Platform based on the revolutionary A100 GPUs, an innovation that we eagerly awaited along with the rest of the industry. The system architecture used 8xA100 GPUs, AMD Epyc (Rome) processors, and 8 HDR 200 Gb NICs, with a theoretical line bandwidth of 200 GB/s on a single system – clearly a head-turner!

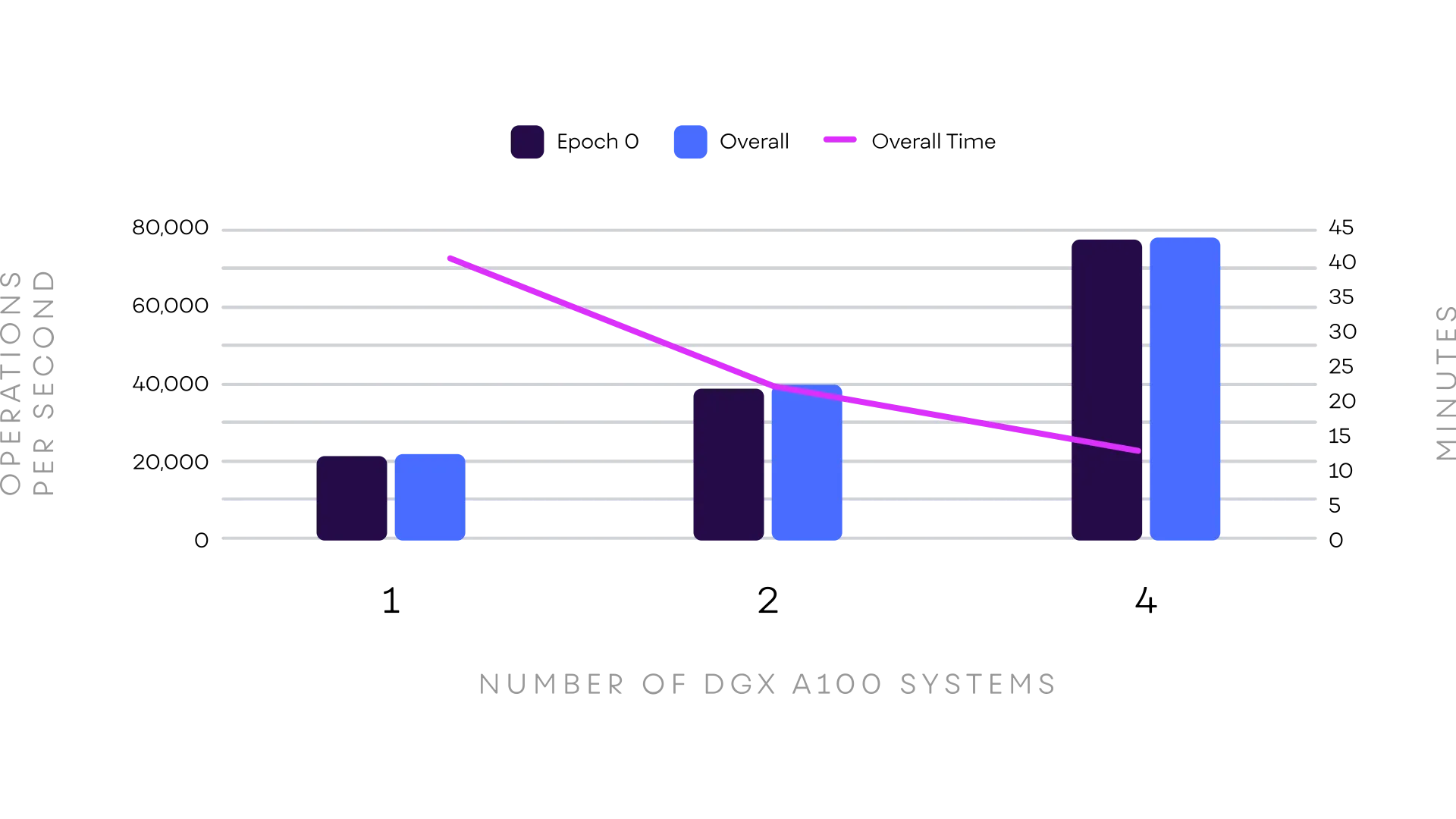

As GDS was not yet generally available at that time, we also published a joint NVIDIA/VAST Reference Architecture (RA) for the DGX A100 systems without GDS. All the measurements were conducted by NVIDIA in their labs. The curious reader should download and study the details in this RA. To highlight some parts of it, the results from running MLPerf with 1 to 4 DGX A100s are shown below.

The MLPerf Training (v0.7) benchmark runs the well-known ResNet-50 residual CNN image classification workload on the ImageNet dataset (typically under MXNet, TensorFlow, or PyTorch), and the results we obtained show impressive linearity in scaling as well as state-of-the-art images/second. However, even more impressive is that Epoch 0 (the first training pass over the data), which typically reads data straight from the storage subsystem with nothing in the file system buffer cache, shows a near-zero difference from the overall average across all Epochs. In other words, reading from the VAST cluster is as fast as subsequent Epochs, where the data can come from system memory!

Back to GDS on the DGX A100s…

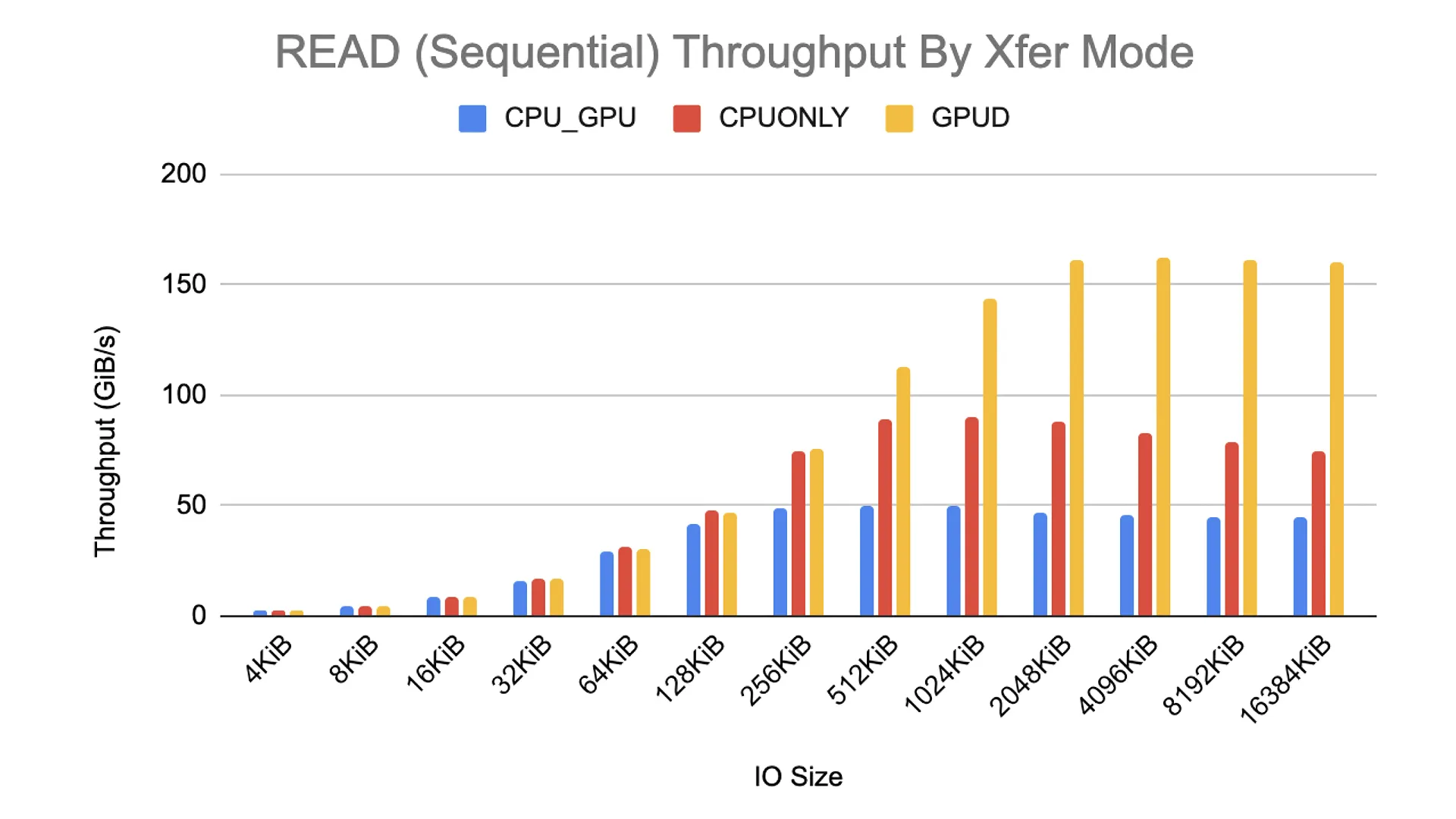

We were not disappointed when we had the opportunity to test GDS on a VAST Data Cluster against a DGX A100 server. We achieved a maximum of 162 GiB/s Read Throughput – which appears to be the maximum the system can deliver, fully saturating the capabilities of the DGX A100 system. The results below were from benchmarking performed by NVIDIA (not by VAST) and were shown and published at the NVIDIA GTC 2020 Fall Conference.
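To put the measured figure next to the theoretical one, note that the line rate quoted earlier is in decimal GB/s while the measured throughput is in binary GiB/s. The following is a small sketch of the conversion, using only the numbers from this article (8 x 200 Gb NICs and 162 GiB/s). It says nothing about where the remaining gap comes from.

```python
GIB = 2**30  # bytes per GiB
GB = 10**9   # bytes per GB


def line_rate_gb_s(num_nics: int, gbit_per_nic: float) -> float:
    """Theoretical aggregate line rate in decimal GB/s."""
    return num_nics * gbit_per_nic / 8


def gib_to_gb(gib_per_s: float) -> float:
    """Convert a binary GiB/s figure to decimal GB/s."""
    return gib_per_s * GIB / GB


theoretical = line_rate_gb_s(8, 200)  # DGX A100: 8 x HDR 200 Gb NICs
measured = gib_to_gb(162)             # 162 GiB/s read throughput
fraction = measured / theoretical

print(f"Theoretical: {theoretical:.0f} GB/s")
print(f"Measured:    {measured:.1f} GB/s")
print(f"Fraction of line rate: {fraction:.0%}")
```

Expressed in the same units, 162 GiB/s is roughly 174 GB/s, or about 87% of the 200 GB/s theoretical line bandwidth.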

VAST Data was one of a few select vendors officially supporting GDS at its launch by NVIDIA, and GDS became generally available in 2021. More ecosystem work was expected in that time frame as well, including support for the NVIDIA cuFile APIs (necessary to exploit GDS capabilities) in major Deep Learning frameworks such as TensorFlow, PyTorch, and MXNet, as well as exploration of new use cases for this work.

GPUDirect Storage Use Cases in Real Life

NVIDIA did some stellar work integrating the GDS cuFile APIs into various pieces of the NVIDIA software portfolio, starting with NVIDIA DALI, a data loading and image augmentation library with Python bindings. They also introduced GDS support for loading images in numpy format, which allows fast loading into GPU memory for inference. VAST Data (yours truly) presented a variety of live benchmarks for GDS and other real-life applications at Tech Field Day in 2023. Notable among these were using the GDS numpy integration to accelerate the MLPerf DeepCam Climate Segmentation benchmark, and using the DALI integration to show how MRI images can be ingested faster with GDS. These results, and others, can be viewed on YouTube in the recording of that presentation.

Since then, the use of GDS has made rapid strides, with NVIDIA incorporating direct GDS support into its RAPIDS framework.

GPUDirect Storage functionality on VAST is invoked through the cuFile APIs from NVIDIA, which provide bindings in the C language and are part of CUDA from CUDA 11.4 onwards. One way to use GDS in applications is to write the appropriate cuFile function calls directly into custom CUDA code in the calling application. The implementation will depend on application details, but most CUDA developers will know how to proceed, as the API specification is fairly straightforward.

However, in many cases, GDS is integrated with other frameworks from NVIDIA, allowing for more transparent access to its capabilities. Note that GDS is useful when the data are needed in GPU memory with no requirement for any CPU-based processing in SYSMEM. Typically, workloads with large datasets benefit from this significantly. There are a few broad areas where GDS works “out-of-the-box” without any need to write to the cuFile APIs.

Many components of the NVIDIA RAPIDS framework support GDS either automatically or with some tweaking of configurations. Much of this comes from the KvikIO libraries and their integration with various tooling: cuDF for dataframes, cuCIM for medical images, cuML for Machine Learning, cuGraph for graphs, and so on. Many file types, such as Parquet, CSV, ORC, and Zarr, as well as multi-tiled Whole Slide Images (WSI), can be accessed using cuDF and cuCIM with significant performance gains using the RAPIDS framework.
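As a rough illustration of what reading through KvikIO looks like from Python, here is a minimal sketch. It assumes the optional `cupy` and `kvikio` packages and a CUDA device are present. When they are not, the helper (a hypothetical name, not part of any library) falls back to an ordinary POSIX read so the example still runs. Whether a given read actually takes the GDS path depends on the driver, filesystem, and mount configuration. KvikIO can also run in a compatibility mode that uses regular file IO.

```python
import os
import tempfile

try:
    # Optional GPU stack: an assumption about the environment, not a requirement.
    import cupy as cp
    import kvikio

    HAVE_GPU_STACK = cp.cuda.runtime.getDeviceCount() > 0
except Exception:  # packages missing, driver missing, or no device
    HAVE_GPU_STACK = False


def read_file_to_device(path, nbytes):
    """Read nbytes from path, into GPU memory when KvikIO is usable.

    Returns a CuPy uint8 array on the GPU path, or plain bytes on the fallback.
    """
    if HAVE_GPU_STACK:
        buf = cp.empty(nbytes, dtype=cp.uint8)  # destination in GPU memory
        with kvikio.CuFile(path, "r") as f:
            f.read(buf)  # blocking read into the device buffer
        return buf
    with open(path, "rb") as f:  # fallback: ordinary read into host memory
        return f.read(nbytes)


# Write a small test file with regular IO, then read it back.
payload = bytes(range(256)) * 4096  # 1 MiB of sample data
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "sample.bin")
    with open(path, "wb") as f:
        f.write(payload)
    result = read_file_to_device(path, len(payload))

# Copy back to host only to verify the contents.
roundtrip = cp.asnumpy(result).tobytes() if HAVE_GPU_STACK else result
print("GPU path used:", HAVE_GPU_STACK)
print("Contents match:", roundtrip == payload)
```

In a real pipeline the buffer would stay on the GPU and be handed to the next processing stage. The copy back to host here exists only to check the result.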

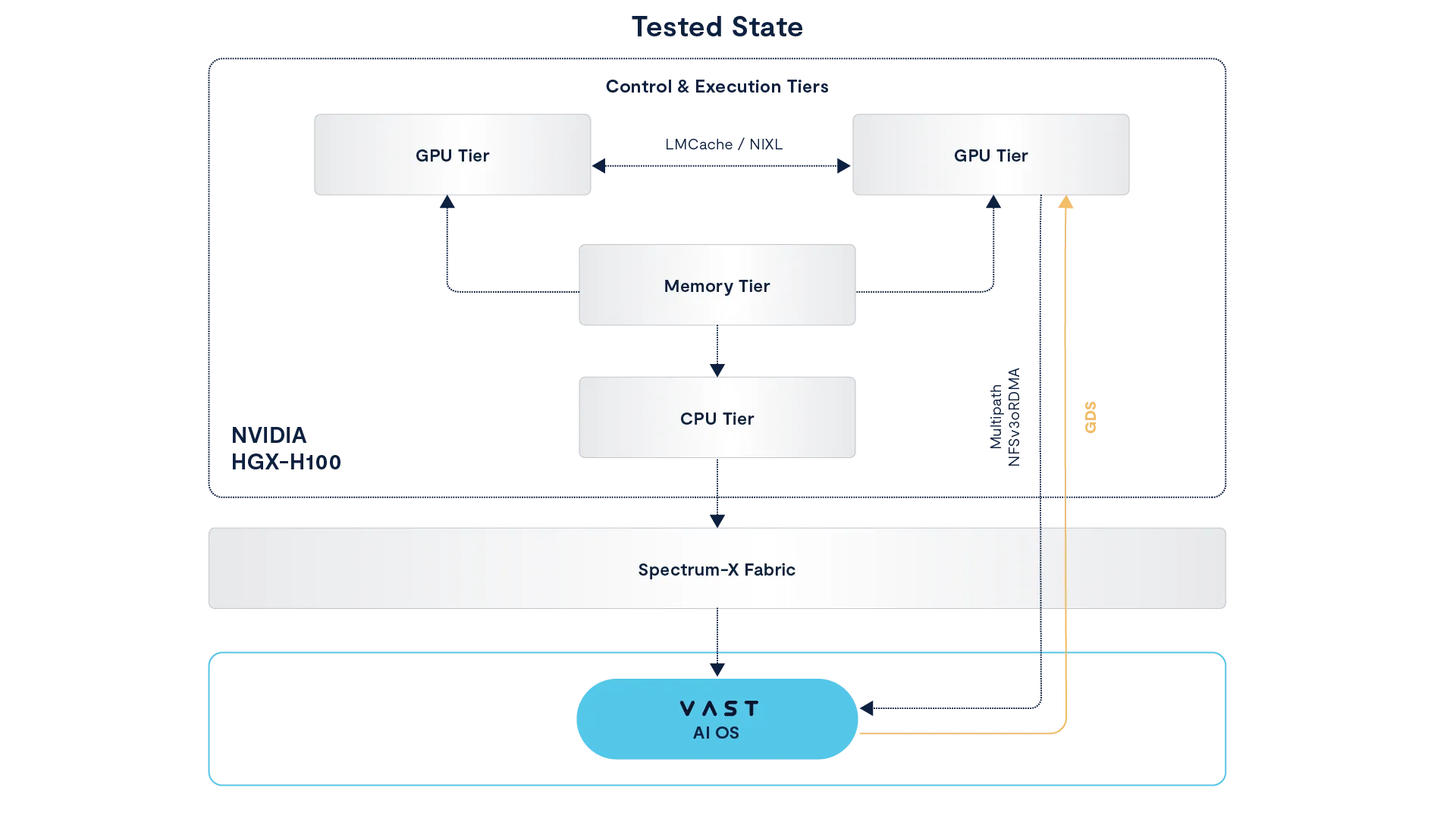

GDS can also be used to accelerate inference in frameworks such as vLLM with LMCache, by speeding up the offload of the KV Cache to external GDS-capable storage. VAST is a contributor to this effort in the LMCache project. This functionality can also be used to accelerate inference in the NVIDIA Dynamo LMCache integration framework.

The reader is encouraged to study the references provided in this section to determine where benefit could be derived.

The interest in this from our customer base is unprecedented. Many of our customers who have purchased DGX A100 systems are planning to use them with VAST systems, with or without GDS, as VAST Data offers a scalable, highly performant, and cost-effective platform to base their Deep Learning workloads on, without any compromises.