Today, it’s our extreme pleasure to announce a deep partnership with Lambda, focused on building data-intensive AI infrastructure powered by NVIDIA GPUs. Unlike many other AI cloud vendors, Lambda brings a distinctive twist to the business of AI. We think that twist is especially valuable as organizations evolve their application strategies to incorporate full-cloud or hybrid-cloud deep learning into their enterprise stacks.

What’s a lambda?

Well, aside from being the Greek letter “L,” the term lambda has many definitions across many disciplines, including computer programming, statistics, and linguistics. Since this is my blog post, I get to choose the connotation that works best for today’s story… so I choose the lambda used in physics. In physics, lambda (λ) often represents wavelength: the distance between successive corresponding points of a periodic wave, such as a light wave or a sound wave.

Back to Lambda (the company). Not every customer wants to consume AI infrastructure in exactly the same way, and the spectrum of infrastructure decisions is deeply rooted in how each customer manages its data. It’s a cliché, but data has gravity. The global race to deploy AI for LLMs, computer vision, and more has also created a new form of gravity: processor gravity.

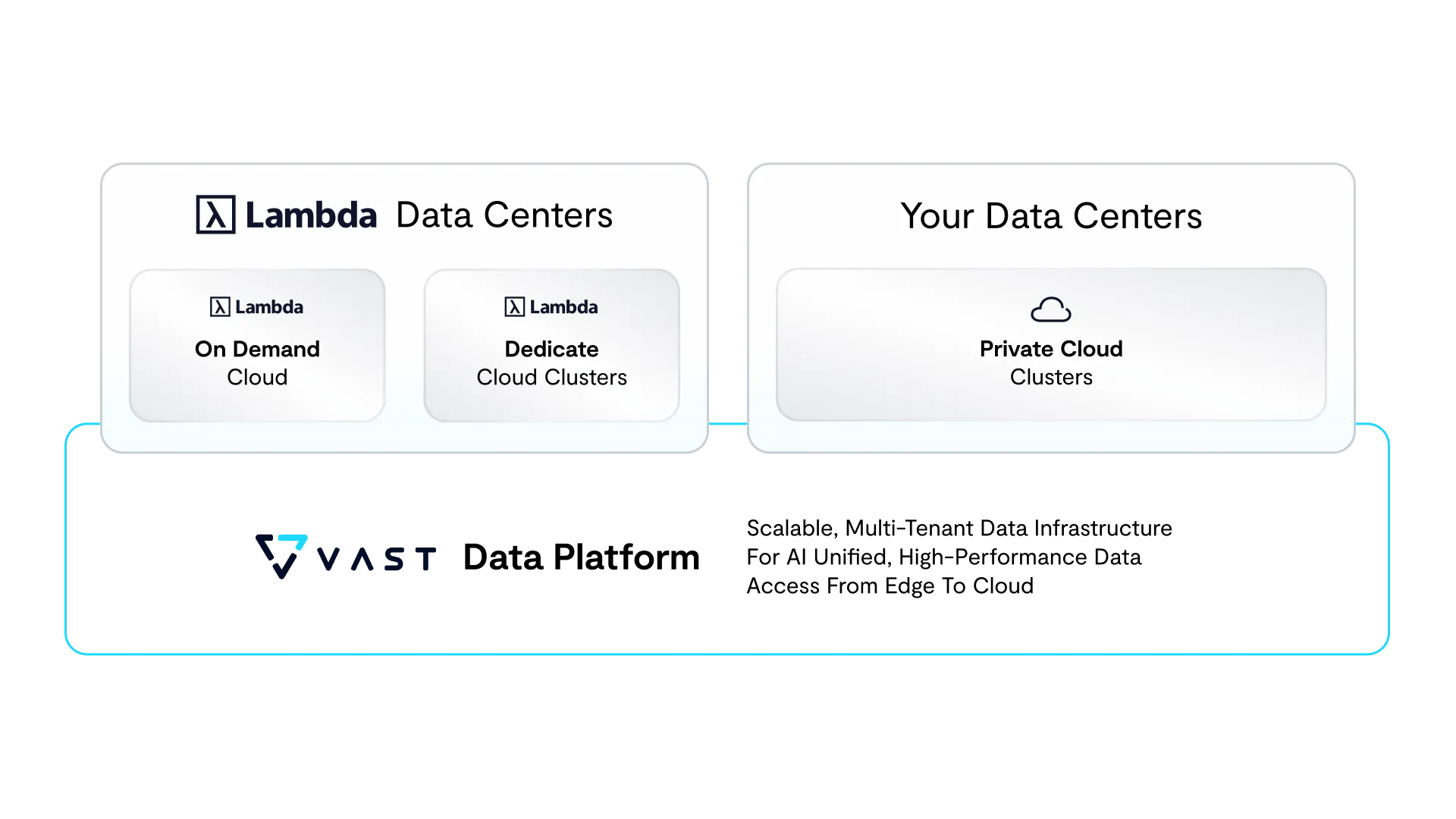

More than most, Lambda has built a business model that caters to the diverse needs of its customers. Its product portfolio includes a hyperscale AI cloud offering and a data center portfolio of NVIDIA GPUs, including NVIDIA H100 Tensor Core GPU clusters, which are designed to deliver an order-of-magnitude leap in performance and scalability for AI workloads. The result is a solution stack and common operating environment that can be deployed in your data center or theirs. Customers get flexibility of choice, but more importantly, Lambda is now a modern cloud provider that can, in essence, extend its cloud all the way into your data center.

So, back to wavelengths: what you have here is a spectrum of infrastructure choice, with the ability to either start in the cloud or burst to an on-demand cloud when workloads dictate. If processors have a certain amount of gravity, the secure Lambda cloud lets customers fight against that gravity by tapping into highly optimized resources in real time.

On the topic of data gravity, in August of this year VAST announced the VAST DataSpace as part of the larger unveiling of the VAST Data Platform. The DataSpace is a new way to build globally distributed namespaces for your files, objects, and tables. We’ve engineered it to break the tradeoff between strict global consistency and fast access at the edge… now you can have both.

As new AI clouds like Lambda take shape and assert a dominant position in the market, an interesting byproduct of the partnership between VAST and Lambda is the ability to extend DataSpaces across all of the locations where Lambda enables its customers to compute. If your data is no longer bound to a specific location, and your processors can be flexibly deployed and scaled on demand… data gravity and processor gravity become things of the past. Wavelengths can be long, but our customers no longer need to struggle with the effects of distance they’ve experienced before.

We’re already working with several large Lambda customers who want to realize this vision of hybrid-cloud AI data management, and we’re excited to work with the Lambda team to provide a superior level of deployment choice… coupled with the flexibility, security, scale, and efficiency that our mutual customers demand as they build data-fueled AI products and services.

Let me finish by thanking the whole Lambda team for their support and partnership.