Data streaming presents a lot of challenges. At scale these challenges compound, requiring heavy engineering effort to deal with each specific use for that data.

After you’ve stitched together the requisite software and infrastructure to accomplish your goals, you hope the goals don’t change and that the selected components continue to perform. This can be challenging because each component added to the system introduces compounding operational effort and fragility.

We’re solving this problem by building out the VAST AI OS to include the core components for modern data-intensive applications, from real-time packet analysis to multi-agent AI pipelines. We’ve added multiple data stores, serverless functions, and more, all running at extremely high performance thanks to our underlying storage architecture.

In this post, we’re focusing on one component that’s an integral part of any data pipeline: the event broker. Specifically, we’re digging into the performance of the Kafka-compatible VAST Event Broker.

VAST Event Broker vs. Apache Kafka and Redpanda

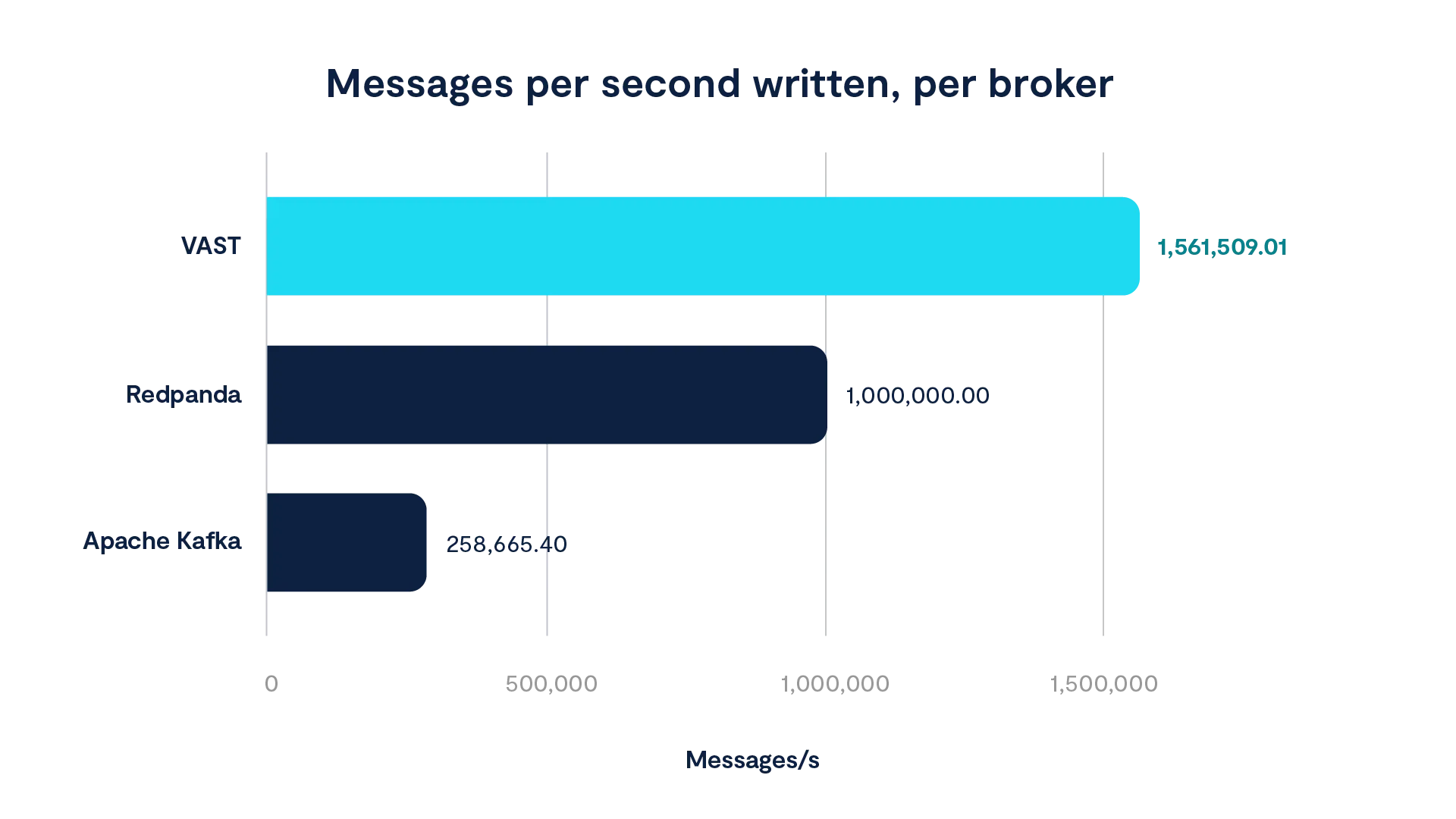

Because we’re talking about benchmarks, let’s start with some numbers:

The VAST Event Broker sustained 604% of Apache Kafka’s throughput, or roughly 6x.

A single VAST Event Broker node delivered 156% of Redpanda’s throughput, or roughly 1.56x (according to their own published figures on similar infrastructure).

The VAST Event Broker was able to ingest 136 million messages per second at 99% efficiency across 88 nodes.

Of course, these abstract numbers don’t tell you much. So here’s some more context: We ran an OpenMessaging benchmark using a "throughput"-oriented Kafka driver on 3-node broker setups for VAST and Apache Kafka. Some workload details:

1 topic

1 KB messages

128 partitions

This test supplied us with the maximum ingress figures - on identical infrastructure - for Kafka and VAST. We tested Redpanda in-house on the same gear and did not achieve their published figures for this workload. We elected to use their own figures anyway.

As noted above, the results of this 3-broker benchmark showed VAST significantly outperforming both other options.

However, this is a 1:1 production/consumption ratio test, which is a bit misleading because, in the real world, that ratio is very likely to be higher on the consumption side. Increasing the read pull on a cluster will put downward pressure on ingress performance. So if you’re operating on the margins of your pub/sub system (as in this benchmark), things can get scary.

The story ends there for Redpanda and Kafka - size accordingly. But our Event Broker has more to say.

The DASE Architecture Fuels Performance

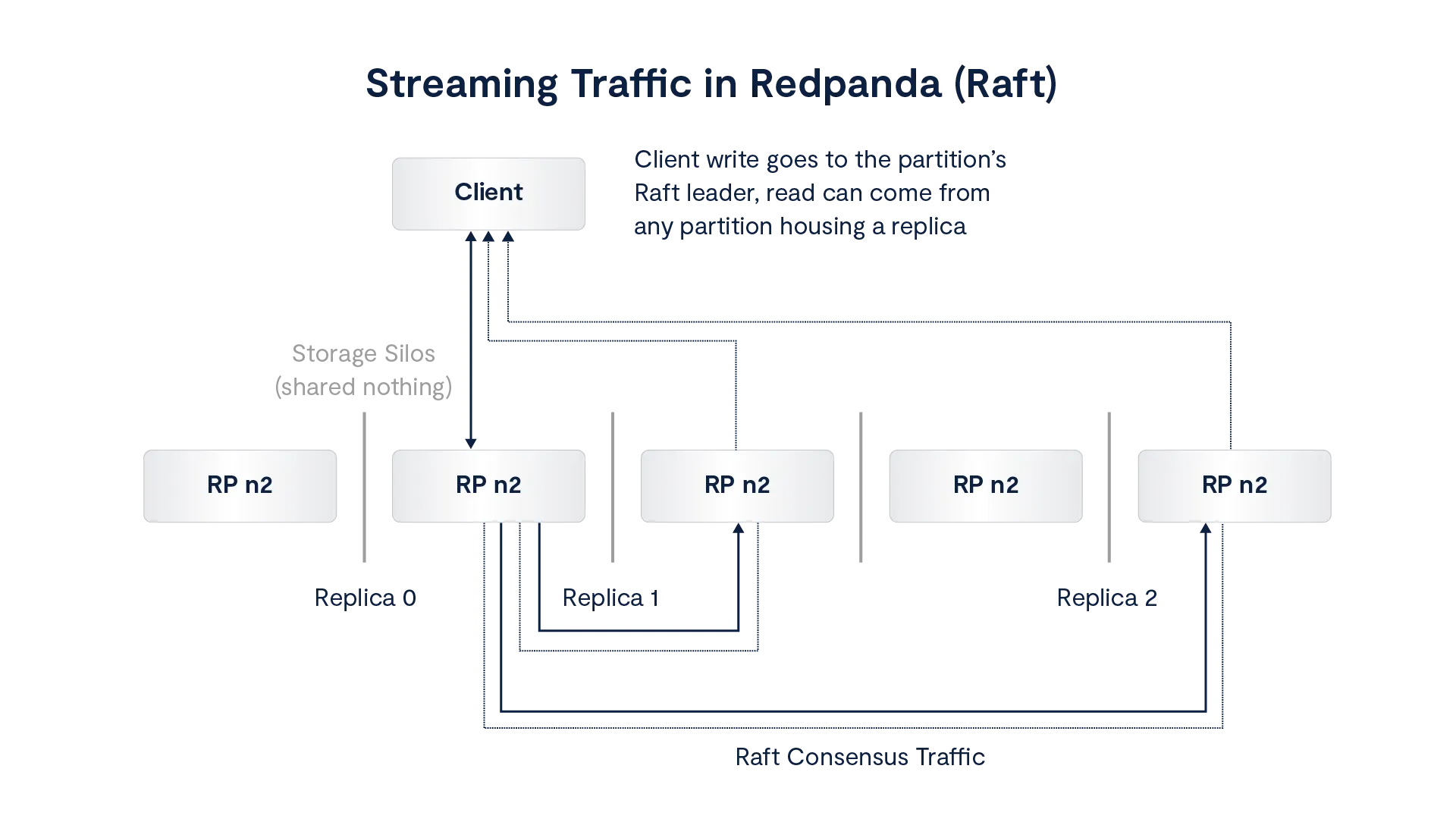

Redpanda and Apache Kafka both take a shared-nothing approach to storing data. They focus on front-end software and use traditional replication and consensus algorithms to maintain resilience and availability. VAST takes a sophisticated but highly streamlined approach to consensus and resilience: Disaggregated and Shared Everything (DASE).

The figure above depicts a simplified view of the shared-nothing, Raft-based replication approach used by Redpanda. Apache Kafka uses Raft only for metadata management and relies on its In-Sync Replicas (ISR) model for data. Kafka’s model is not depicted here because Redpanda’s is faster.

Writes are directed to the Kafka partition’s Raft leader (there is a Raft session per partition), and that broker writes at least two additional copies of each data chunk to two other nodes. The write amplification of full replication is part of the deal. Acknowledgements are sent back to the leader on successful writes and, once a minimum number of copies are stored, the data is considered safe and coherent. Because this is a streaming platform, reads happen very close in time to the writes, so they incur checks of the data’s status over the Raft protocol, and misses add to the consensus traffic.
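The acknowledgement rule described above can be sketched in a few lines. This is a generic majority-quorum illustration, not Redpanda’s or Kafka’s actual implementation; the function names are hypothetical and the replica count of 3 matches the "two additional copies" case.

```python
def quorum_size(replicas: int) -> int:
    """Smallest number of copies that forms a majority of the replica set."""
    return replicas // 2 + 1


def is_committed(stored_copies: int, replicas: int) -> bool:
    """A write counts as safe once a majority holds it (leader's copy included)."""
    return stored_copies >= quorum_size(replicas)


def bytes_written(payload_bytes: int, replicas: int) -> int:
    """Full replication stores the entire payload once per replica."""
    return payload_bytes * replicas


if __name__ == "__main__":
    replicas = 3  # leader plus two followers
    print("quorum:", quorum_size(replicas))
    print("leader only committed?", is_committed(1, replicas))
    print("leader + 1 follower committed?", is_committed(2, replicas))
    print("bytes stored for a 1 KB message:", bytes_written(1024, replicas))
```

Every message pays for both the extra copies and the round trip needed to reach the quorum, which is the cost the rest of this section contrasts with DASE.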

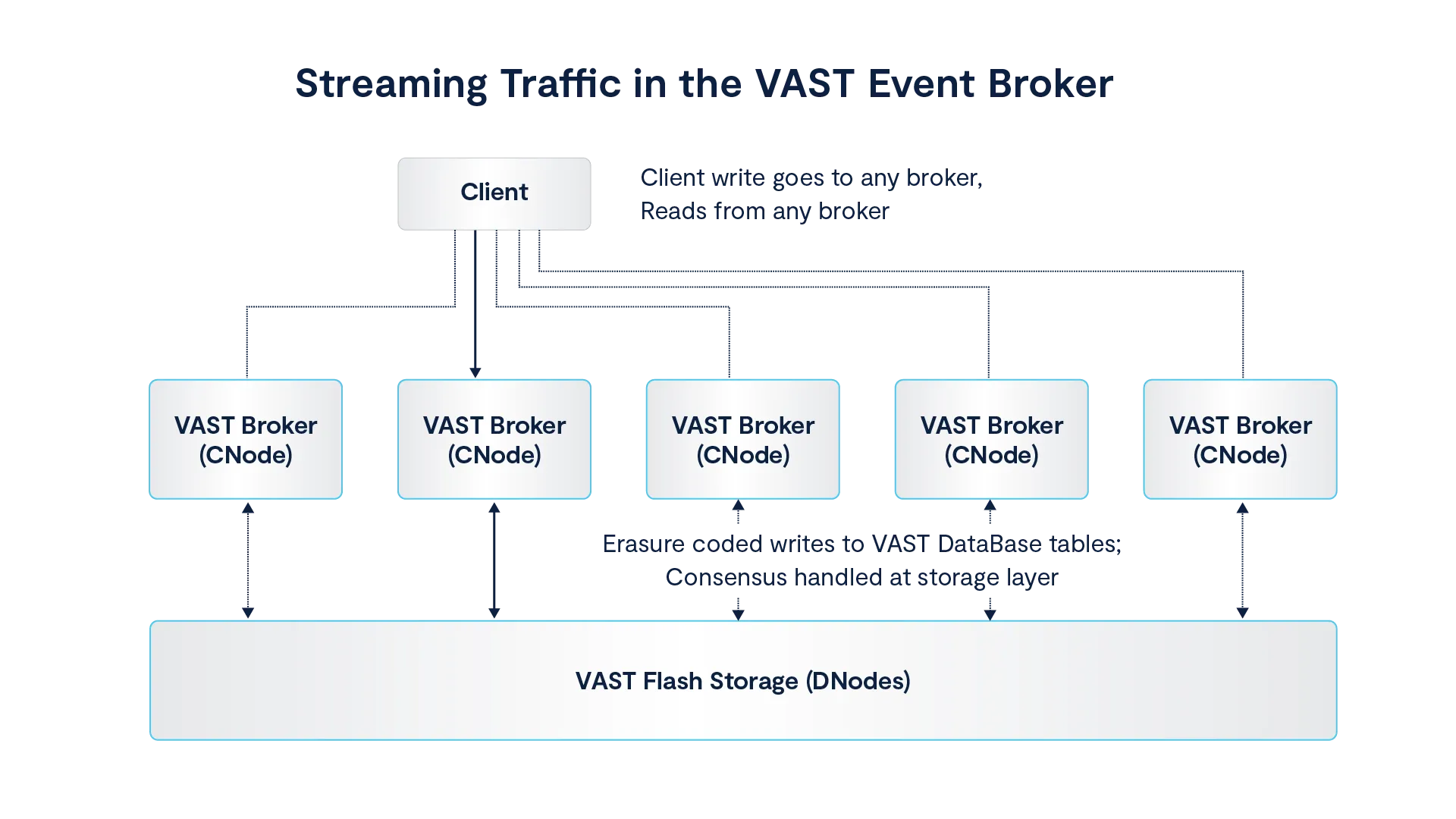

VAST is fundamentally different. Our approach is deceptively simple when visualized, but it is the result of sophisticated software design that merges modern networking and hardware capabilities to supply an end-to-end distributed system that “works” exactly like a single, infinitely scalable entity:

Erasure coding reduces write amplification from +200% to +3% while maintaining five nines of availability.

Consensus is handled on-disk in the metadata layer, eliminating the need for external, complex, and often fragile consensus algorithms. Ultra low-latency RDMA networking and storage-class memory (SCM) make this possible.

VAST operates without siloing data for safe replication arrangements, making capacity and performance expansions trivial.
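To make the write-amplification comparison concrete, here is a minimal sketch of the arithmetic. The stripe geometry used below (146 data strips plus 4 parity strips) is an assumption chosen only because it lands near +3%; the article does not state the actual layout, and the function names are hypothetical.

```python
def replication_overhead(copies: int) -> float:
    """Extra data written per byte of payload when storing full copies."""
    return float(copies - 1)


def erasure_overhead(data_strips: int, parity_strips: int) -> float:
    """Extra data written per byte of payload with erasure coding."""
    return parity_strips / data_strips


if __name__ == "__main__":
    # Three full copies: two extra copies per payload byte.
    print(f"3x replication: {replication_overhead(3):+.0%}")
    # Hypothetical wide stripe: 4 parity strips protect 146 data strips.
    print(f"146+4 erasure coding: {erasure_overhead(146, 4):+.0%}")
```

The wider the stripe, the smaller the parity share, which is why overhead can drop to low single digits without storing full copies.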

What all of this means is that throughput isn’t constrained by anything but the aggregate of available CPUs in the system and networking bandwidth, and latency is reduced through the elimination of consensus traffic. Fundamentally, almost everything VAST does is grounded in DASE.

I encourage you to read about it to understand the details of the architecture and how it has revolutionized infrastructure over the past five years.

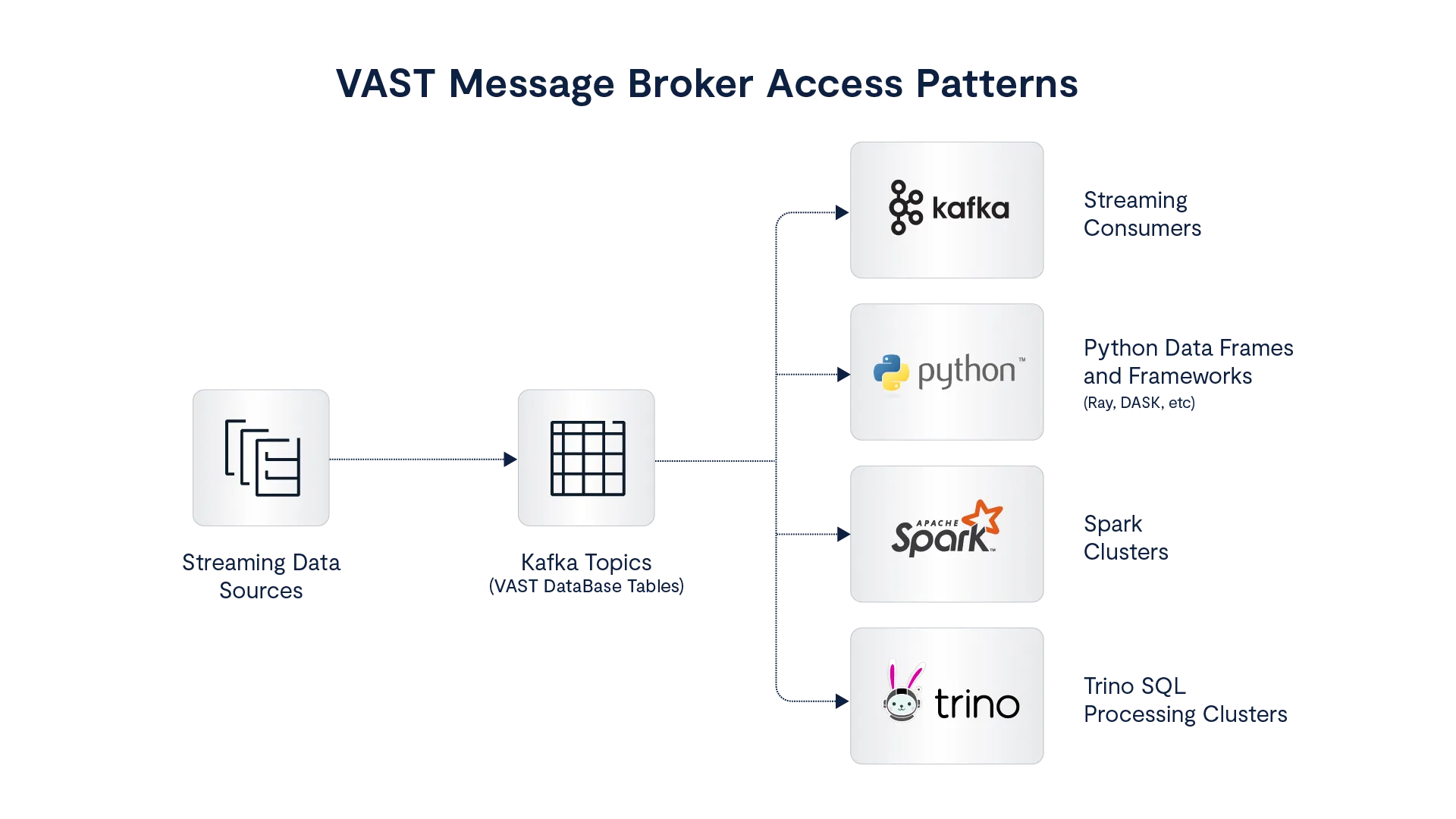

Tabular Access to Topics

You can read more about the VAST Event Broker features here, but the thing we’re focusing on now is how Event Broker topics are stored as VAST DataBase tables. Using a tabular backend for Kafka topics gives us a structured data interface to those topics. In this case, that interface can be Trino, Spark, and/or the greater Python ecosystem via the VAST DataBase SDK.

Rather than applications subscribing to topics over Kafka APIs and sorting through entire streams to down-select, or implementing complex pipelines with additional software like Flink or ksqlDB, clients can choose to consume real-time and historical data from table-backed topics with a data-processing engine. This has a lot of advantages:

No limitations are imposed by the Kafka protocol itself; partition count is not a factor in consumer access. Even if the topic has only 1 partition, the entire cluster can be brought to bear on the topic through processing engines (Trino, Spark, Python SDK).

Current and historical data can be efficiently accessed and processed in real time.

The tables themselves ensure high availability and data resilience, and they bring the entire catalog of VAST’s governance, visibility, tenancy, and data protection features to the topics.

The DASE architecture handles low-latency consensus, avoiding discrete ZooKeeper or KRaft instances.

This capability obviates the need for split architectures for housing wide time windows and separating real-time and historical data. Workloads like streaming ETL and rolling aggregations can be combined with full-blown analytical workloads with complex joins and full history processing.

To recap:

A single processing engine will have access to real-time and historical data in a single table, simplifying fraud and anomaly detection as well as deep analysis.

Topics are available in a structured format, making it easy to select relevant data out of a stream rather than subscribing to the entire contents of a topic.
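The difference between subscribing to a whole stream and selecting from a table can be illustrated with a small, self-contained sketch. It uses Python’s built-in sqlite3 module purely as a stand-in for a tabular backend; it is not the VAST DataBase SDK, Trino, or Spark, and the `payments` table, its columns, and the sample records are all hypothetical.

```python
import sqlite3

# Hypothetical topic contents: (message offset, timestamp, account, amount).
EVENTS = [
    (0, 1000, "acct-1", 25.0),
    (1, 1001, "acct-2", 9800.0),
    (2, 1002, "acct-1", 40.0),
    (3, 1003, "acct-3", 12500.0),
]


def consume_and_filter(records, min_amount):
    """Stream-style access: read every record, then down-select on the client."""
    return [r for r in records if r[3] >= min_amount]


def open_topic_table(records):
    """Load the same records into an in-memory table (stand-in for a table-backed topic)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE payments (msg_offset INTEGER, ts INTEGER, account TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO payments VALUES (?, ?, ?, ?)", records)
    return conn


def query_large_payments(conn, min_amount):
    """Table-style access: the engine returns only the rows that match."""
    cur = conn.execute(
        "SELECT msg_offset, ts, account, amount FROM payments "
        "WHERE amount >= ? ORDER BY msg_offset",
        (min_amount,),
    )
    return cur.fetchall()


if __name__ == "__main__":
    conn = open_topic_table(EVENTS)
    print(query_large_payments(conn, 5000.0))
```

Both paths return the same rows; the point is that the second one pushes the selection to the engine, so the client never has to read the records it does not care about.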

Scaling to 136 Million Messages per Second

Moving away from the niceties of tabular access and pipeline complexity reduction, let’s return to raw performance. The obvious next question is: “Does it scale?” Yes, it does.

In fact, we achieve near-limitless linear scaling to handle I/O, compute, and pure capacity. This is the fundamental value of the DASE architecture. To prove this out, we took the above OpenMessaging benchmark using 1KB messages and scaled it to 88 broker nodes and thousands of partitions and producers.

Here’s the hardware setup:

VAST cluster with 88 AMD-based compute front-ends (98 threads, 382GB memory each)

62 JBOFs housing 1,364 NVMe devices and 496 SCM devices

12 client hosts running 120 OpenMessaging benchmark workers

200GbE networking throughout

Here’s the benchmark result:

| | 3 Nodes | 88 Nodes |
|---|---|---|
| Throughput | 4.7 million msg/s | 136 million msg/s |
| Scaling efficiency | 100% (baseline) | 99% |

Scaling is effectively linear when the per-node performance of a 3-node cluster is used as the 100% baseline. We use three nodes rather than one because single-node setups don’t incur penalties for consensus and replication in Kafka and Redpanda; VAST’s architecture obviates both of those factors, but a single node does benefit from 100% cache hits in memory. The VAST Event Broker scales without rebalancing partitions or worrying about minimum replica counts - replicas are entirely ignored by VAST due to the erasure-coded backend.
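As a quick check on the efficiency figure, the calculation can be sketched as per-node throughput at scale divided by per-node throughput at the 3-node baseline. The function name is hypothetical, and the inputs are the rounded values from the table, so the result is approximate.

```python
def scaling_efficiency(base_nodes, base_rate, scaled_nodes, scaled_rate):
    """Ratio of per-node throughput at scale to per-node throughput at baseline."""
    per_node_base = base_rate / base_nodes
    per_node_scaled = scaled_rate / scaled_nodes
    return per_node_scaled / per_node_base


if __name__ == "__main__":
    # Rounded figures from the benchmark table (messages per second).
    eff = scaling_efficiency(3, 4.7e6, 88, 136e6)
    print(f"scaling efficiency: {eff:.1%}")
```

With the rounded inputs this lands just under 99%, in line with the reported figure.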

Scaling and sizing for workload additions, expansions, and new use cases are easy and can be done with a confidence that is highly unusual in the world of data pipelines.

What If …?

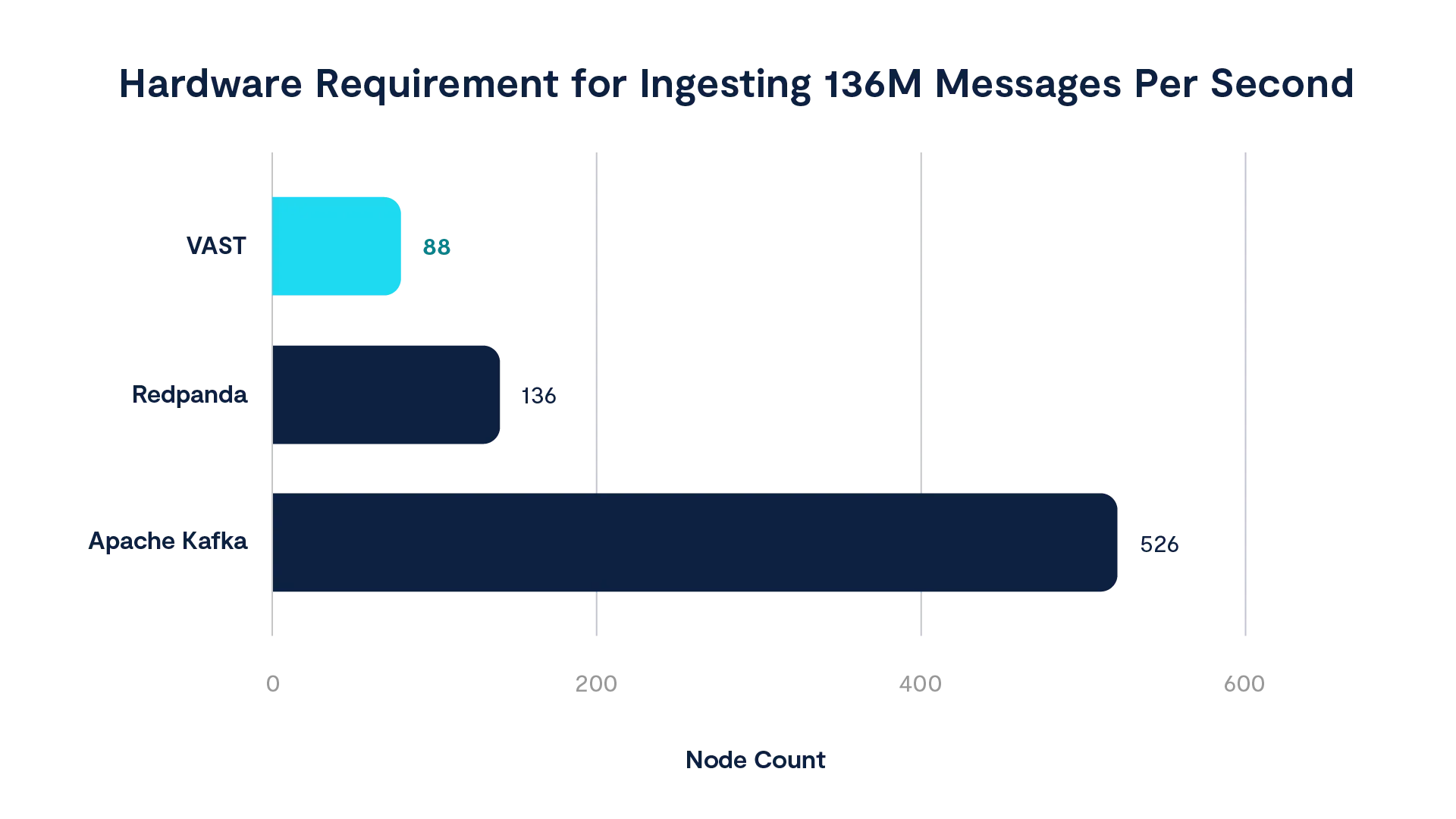

Assuming they scaled with 100% efficiency (an unsubstantiated assumption given the per-partition Raft overhead and backend network stress, but let’s do it anyway), achieving the same results with Redpanda or Apache Kafka would take 136 nodes or 526 nodes, respectively.

A VAST cluster with 136 brokers could drive more than 212 million messages per second. A VAST cluster with 526 brokers would get you 821 million messages per second. To achieve a cool 1 billion messages per second (1 TB/s of bandwidth with 1 KB messages), you would need only 650 brokers. Achieving these types of results using the current generation of message brokers would require thousands of nodes - and probably would not work.
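The projections above are straightforward linear extrapolation, which can be sketched as follows. The per-node rate is derived here from the rounded 88-node result, which is an assumption; the article’s own figures appear to use a slightly higher per-node rate, so the outputs land close to the quoted numbers but not exactly on them. All names are hypothetical.

```python
import math

# Per-node rate derived from the rounded 88-node result (messages per second).
VAST_NODES = 88
VAST_PER_NODE = 136e6 / VAST_NODES

# Node counts quoted above for matching 136 million msg/s at 100% efficiency.
REDPANDA_NODES = 136
KAFKA_NODES = 526


def projected_throughput(nodes, per_node_rate):
    """Linear projection: assumes every added node contributes the same rate."""
    return nodes * per_node_rate


def nodes_needed(target_rate, per_node_rate):
    """Smallest whole number of nodes that reaches the target rate."""
    return math.ceil(target_rate / per_node_rate)


def footprint_ratio(other_nodes, vast_nodes=VAST_NODES):
    """How many times more nodes the other system needs for the same result."""
    return other_nodes / vast_nodes


if __name__ == "__main__":
    print(f"136 VAST brokers: {projected_throughput(136, VAST_PER_NODE) / 1e6:.0f}M msg/s")
    print(f"526 VAST brokers: {projected_throughput(526, VAST_PER_NODE) / 1e6:.0f}M msg/s")
    print(f"brokers for 1B msg/s: {nodes_needed(1e9, VAST_PER_NODE)}")
    print(f"Redpanda footprint: {footprint_ratio(REDPANDA_NODES):.2f}x")
    print(f"Kafka footprint: {footprint_ratio(KAFKA_NODES):.2f}x")
```

The footprint ratios come out at roughly 1.5x for Redpanda and roughly 6x for Apache Kafka, which matches the per-node comparison at the top of the post.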

Higher Performance, Lower TCO

The headline here is that, using VAST, you can reduce your event broker footprint by a third at bare minimum, probably a lot more. And that’s just when evaluating the raw performance of the protocol implementation.

But we’re trying to solve business problems. Add tabular access to topics using MPP engines, pipeline stage reduction, and the full feature-set behind the VAST AI OS, and our TCO is fractional compared to current complex pipeline implementations. This has always been a primary driver of technology decisions, and it will become even more pronounced as AI workloads continue to push performance requirements.

If you want to learn more about how the VAST Event Broker fits into our broader platform and dive into a popular and high-value use case, check out this walkthrough of our DataEngine platform for running serverless functions.